Observations on ‘The Search for Technosignatures: A Review of Possibilities’ 5 Jun 10:42 AM

I don’t usually post comments at the top of the site, but I’m making an exception here for a couple of reasons. The recent paper I reviewed by Clément Vidal and colleagues covering technosignatures and strategies for detection is a significant work, the kind of consolidation of resources the field needs as the original radio and optical-oriented SETI expands into new realms. We now have options calibrated for intelligence via archival and observational detection of megastructures, planetary or stellar engineering, or other projects far beyond our own level of technology. Dean Zierman’s thoughts on the Vidal paper open a number of issues and highlight assumptions we’ll always need to examine. Dean is a telecommunications expert specializing in radio frequency communications, one who has been deployed to over 150 disasters and dangerous events including earthquakes, hurricanes, tsunamis and the 9/11 attacks on the United States. He has served as a subject matter expert on communications in hostile or austere environments for multiple agencies and organizations. Herewith his thoughts on the technosignature hunt and the recent review paper, which I hope will feed further energy into our conversation.

by Dean Zierman

Observations in relation to this paper. I’ll be quoting frequently from the document.

“Another limitation is the meaning of the lifetime L of a civilization. What does it mean for an interstellar civilization seeding life or colonies, or for galactic colonization models? Some colonies might go extinct, while others could transform so much that the link with parent civilization or others would be lost.”

This does not consider how long a specific technology lasts.

It also does not consider how different types of technology interact with each other or with civilization. To be fair, these ideas have not yet been covered in the literature.

“This galactic and stellar context shows that there is ample time for advanced civilizations to have explored the galaxy systematically.”

“It means that there could have been up to 4500 opportunities for visitation by one single spacefaring civilization over the lifetime of the Earth.”

The windowing issue remains unaddressed. Specifically, it is necessary to determine the duration during which an advanced civilization might explore or be detectable within the Earth’s solar system, as well as the period during which an Earth-based civilization has existed in the solar system. It is also important to assess whether there is any temporal overlap between these two windows.
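To make the windowing question concrete, here is a minimal sketch of the overlap test. The function name and every number in it are hypothetical placeholders chosen for illustration; neither the paper nor this commentary supplies them.

```python
def window_overlap(a_start, a_end, b_start, b_end):
    """Return how long two time intervals coincide (0.0 if they never do)."""
    return max(0.0, float(min(a_end, b_end) - max(a_start, b_start)))

# Hypothetical windows, in years relative to the present (negative = past).
visitor_window = (-1_000_000, -990_000)  # assumed 10,000-year visit, long ago
observer_window = (-100, 0)              # assumed ~100 years of capable instruments

# A visit that ended long before we could look gives no shared time.
print(window_overlap(*visitor_window, *observer_window))

# A visit that began 50 years ago and continues shares 50 years with us.
print(window_overlap(-50, 200, *observer_window))
```

The point of the sketch is only that both windows have to be estimated before any count of "opportunities for visitation" says much about detectability.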

“Even before searching for traces of past extraterrestrial visitation, we can ask whether we are the first advanced civilization in the geological history of Earth. It turns out that traces of a civilization –even one going through an anthropocene phase– would be very challenging to detect because of erosion dynamics such as surface weathering or plate tectonics. These constraints are discussed through the Silurian hypothesis (Schmidt and Frank 2019), and the authors conclude that artefacts or fossilized examples of a population older than 4 million years (Ma) would be unlikely to be found.”

“Still, what could be searched for in this 4 Ma window? Here are a few examples. We could look for evidence of large scale agriculture that would have led to a disruption of the soil nitrogen cycle. Another line of research could look for evidence of mining, such as anomalous geological structures that would be indicative of large mining operations.”

This suggests that on Earth, a civilization eventually produces enough pollution to be noticed. In the past 12,000 years, about 70 civilizations have collapsed.

These might be the same technological signs we could detect on planets outside our solar system.

“According to Schmidt and Frank (2019), all of the pollution of the anthropocene would fit within 1 cm of sediment layer, which makes sense given how short our industrial civilization has existed on geological timescales. This explains why even if there was a pre-human civilization which went through its own anthropocene, we might not have noticed it in our sediment analysis yet, while also leaving open the possibility that we could discover such a layer in the future.”

Over the last 12,000 years, only a few civilizations have reached a level where they may have produced detectable pollutants. Of those, only the most recent may have caused changes large enough to be detected in isotope ratios or radioactive isotope production.

“This makes sense if we look at the Barrow scale that proposes that civilizations progress by increasing their ability to manipulate, manufacture, and control smaller and smaller scales (see Barrow 1998, Vidal 2014, and the Barrow scale section 4.1.0).”

Another way to look at this is that as a civilization’s ability to manipulate and manufacture at smaller scales increases, it becomes less necessary to do the same thing at larger scales. This smaller scale also means that what is built at a larger scale is likely much more efficient and harder to detect at interstellar distances.

“Although there are reports of unidentified flying objects (UFO) dating back over millennia (Stothers 2007), the first modern sighting to popularize UFOs was reported by Kenneth Arnold in 1947:”

“How many reports are really unidentified or anomalous? Out of 12,000 reports analyzed in Project Blue Book, 6% of them remained unexplained. How are UAP reports categorized? 90%-95% end up as (1) explained phenomenon. The remaining 5-10% of reports could end up (2) unexplainable due to lack of credible data. Those that do have credible data imply either (3) an unknown physical mechanism, or (4) an unknown manifestation of extraterrestrial intelligence (see Fig. 4).”

This would seem to be a straightforward method for looking for UFOs, or UAPs in the modern term. Unfortunately, due to the rise of our own technology, the noise level is rising exponentially faster than our ability to screen it out of any possible signal. This is similar to the problem radio SETI faces: we are generating UAPs with our own technology, so the more technology we deploy, the more noise we create.

It has been observed, somewhat jokingly, that the resolution of our digital cameras has greatly increased, leading one to believe that the resulting pictures of UAPs would be much clearer and thus more definitive. What has happened is that we have increased our ability, with the increase in the number of possible detectors, to detect more UAPs, which are also at the edge of their detection resolution. In short, we have many more fuzzy, questionable photos.

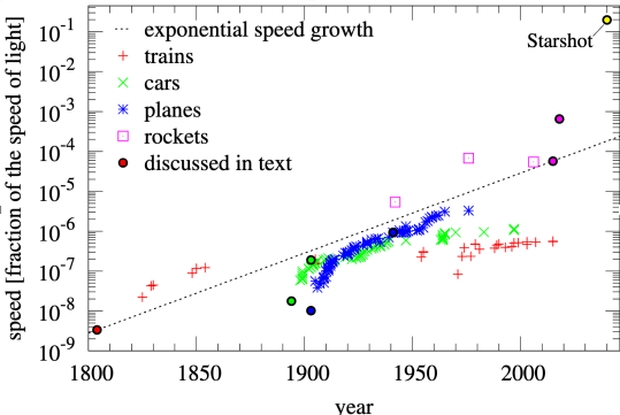

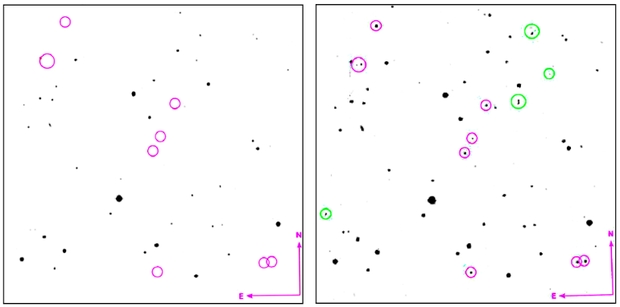

“Villarroel et al. (2021, 2022a) conducted an analysis of 1950s archival photographic plates from the Palomar Observatory Sky Surveys (POSS-I, 1948-1958 and POSS-II, 1980s-1990s).”

This is another example of our technology generating noise that is becoming impossible to filter out. If it weren’t for all the “stuff” we have sent into space, this would be a straightforward and definitive technique. But we have already FUBARed this to a near hopeless extent. The latest ground-based telescopes coming online should be able to detect these objects if they existed. Oops, I guess that’s not happening.

An idea occurs to me. The massive array of Starlink satellites has started using their star trackers to look for other satellites above them and track them for collision avoidance. It might be possible for someone to obtain copies of this data and, using modern algorithms, identify objects in much higher orbits that are ignored for tracking, some of which could be ETI satellites.

“To sum up, many present and future observational facilities such as the Vera Rubin Observatory or the Nancy Grace Roman Space Telescope as well as planned missions in the solar systems that together with machine learning techniques have the potential to enable a much more systematic and comprehensive search for anomalies and possibly artefacts in our own solar system (see also Haqq-Misra et al. 2022c).”

Almost by definition, the search for anomalies is the first step in the scientific process. So just searching for anomalies should be considered an adequate scientific reason to perform many of the studies discussed.

The issue with the Kardashev scale, and even more so with the Barrow scale, is that they do not focus on what a civilization is trying to achieve with its technology. For example, in Larry Niven’s novel The Ringworld Engineers, a civilization spends vast resources and time building a single massive structure. But do they really need something that big? Another problem is that you can’t use the structure until it’s finished. Since it’s just one structure, everything depends on it, so if it fails, everything is lost. It’s like putting all your eggs in one basket. If the goal is just to have more space, wouldn’t it be smarter to build several Banks orbitals? Or maybe it would make more sense to create a lot of O’Neill cylinders inside asteroids. These cylinders could even be used as slow ships to travel to other star systems.

The whole idea of Big Dumb Objects raises the question: why build a BDO? Almost anything you could think of as a reason to build them, you could do much better with other technology. BDOs then become the ultimate SETI MacGuffin. Just because somebody can build something doesn’t mean that they would build it, especially when there are better ways to accomplish the same thing. All of this leads one to speculate that more advanced civilizations would not appear high on the K scale but would be much higher on the B scale, with smaller, very efficient, and dispersed objects with low radiation indexes that are hard to find. This just means we should be looking for smaller, darker objects rather than the big, bright, flashing ones.

4.2. Surface Technosignatures

4.3. Atmospheric Technosignatures

4.4. Orbital Technosignatures

These sections, in my opinion, show the paper’s biggest limitation. The information is technically correct but lacks a clear framework for how technology creates civilizations that then modify technologies, which in turn change civilizations. It misses an important time and scale element.

As I mentioned above, civilizations and their interdependent technologies have risen and fallen. The vulnerability of civilizations and technology to collective and cumulative risks creates a myopic view of the detectability of these technologies in both time and scale. For 10,000 years, you would’ve been looking for just fire on Earth, for example. How would you be able to tell the difference between civilization and a natural phenomenon?

As technology advances, detection actually becomes harder. For example, we once had FM radio stations that broadcast in all directions with over a megawatt of power in the hundred megahertz range. This created a window of about 60 to 80 years when this technology could be detected. Now, we use higher frequency cellular systems that offer two-way communication for many more people at much higher data rates. Instead of a few powerful narrowband transmitters that could be picked up with large antennas (perhaps on the far side of the Moon), we now have many low-power wideband transmitters. These blend into the background noise, making them much harder to detect.
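A rough way to see the narrowband-versus-wideband point is to compare power per unit bandwidth arriving at a distant receiver. The sketch below assumes an idealized isotropic transmitter and the inverse-square law; the function name and the power, bandwidth and distance values are illustrative assumptions, not figures taken from the paper.

```python
import math

LIGHT_YEAR_M = 9.4607e15  # metres in one light year

def spectral_flux_density(power_w, bandwidth_hz, distance_ly):
    """Flux per unit bandwidth (W/m^2/Hz) from an isotropic transmitter."""
    distance_m = distance_ly * LIGHT_YEAR_M
    flux = power_w / (4.0 * math.pi * distance_m ** 2)  # inverse-square law
    return flux / bandwidth_hz

# Assumed, illustrative values only:
narrowband = spectral_flux_density(1e6, 200e3, 10)  # 1 MW in a 200 kHz channel
wideband = spectral_flux_density(10.0, 20e6, 10)    # 10 W spread over 20 MHz

print(f"narrowband: {narrowband:.2e} W/m^2/Hz")
print(f"wideband:   {wideband:.2e} W/m^2/Hz")
print(f"ratio:      {narrowband / wideband:.1e}")
```

Many low-power transmitters do add up, but their energy is spread across far more spectrum, which is why the per-hertz signal a distant listener would see drops so sharply under these assumptions.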

Many technologies can be detected during their rise and fall, sometimes even within the short lifespan of a single civilization. One example not covered in the paper is the development of laser communications between satellites and ground stations. Although this technology is just emerging, it is unclear how long we will remain detectable. Among the technologies discussed, this type of laser communications may offer the best chance to find evidence of technological activity. It could be the easiest to detect and might be picked up by current or future detectors. It may also have the longest detectability period and the largest scale compared to the other technologies mentioned.

4.2. Surface Technosignatures

4.3. Atmospheric Technosignatures

Many of these might have such short detection windows that they approach the probability asymptote.

4.4. Orbital Technosignatures

4.5. Exoplanetary System Technosignatures

4.6. Multiplanetary Systems and Terraforming

5. Stellar Technosignatures

These raise the same concerns as BDOs and become more SETI MacGuffins.

SETI MacGuffins with low detection windows that approach the probability asymptote.

6. Interstellar Technosignatures

I found this section to be the most interesting and comprehensive. That’s not surprising, as this is the field I’m most familiar with, other than resisting the fall of civilization: communications.

Although the authors were very comprehensive, I did notice two aspects of communication they did not discuss. They mentioned modulation schemes, but they did not mention encoding or spread-spectrum schemes. These are actually related. For example, you could use a frequency-hopping system to spread your data over a wider bandwidth, which can give you some advantages in certain propagation conditions. You could also use direct-sequence spread spectrum, which has its own advantages, and you can combine the two.
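For readers unfamiliar with direct-sequence spread spectrum, here is a toy baseband sketch. It is a simplified illustration under my own assumptions (a 31-chip pseudo-random code, Gaussian noise, hypothetical function names), not a description of any real system or of anything the paper proposes.

```python
import random

def make_chip_sequence(length, seed=42):
    """Pseudo-random +1/-1 spreading code shared by sender and receiver."""
    rng = random.Random(seed)
    return [rng.choice((-1, 1)) for _ in range(length)]

def spread(bits, chips):
    """Map bits 0/1 to -1/+1 and multiply each bit by the whole chip sequence."""
    return [(1 if b else -1) * c for b in bits for c in chips]

def despread(signal, chips):
    """Correlate each chip-length block with the code and take the sign."""
    n = len(chips)
    bits = []
    for i in range(0, len(signal), n):
        corr = sum(s * c for s, c in zip(signal[i:i + n], chips))
        bits.append(1 if corr > 0 else 0)
    return bits

chips = make_chip_sequence(31)
message = [1, 0, 1, 1, 0]
tx = spread(message, chips)

# Add noise comparable in size to the per-chip signal amplitude.
noise_rng = random.Random(7)
rx = [s + noise_rng.gauss(0, 1.0) for s in tx]
print(despread(rx, chips) == message)
```

Each bit is smeared over 31 chips, so the transmission occupies more bandwidth and looks noise-like to anyone without the code, while a receiver that knows the code recovers the bit by correlation. That is the property that makes such signals hard to spot in a search tuned for narrowband carriers.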

The authors also mentioned at the beginning that all communications were assumed to be essentially noiseless. In reality, no such channel exists. All modern communication systems either have inherent resistance to noise or incorporate some form of error correction into the modulated data. This could be bidirectional error correction, in which the receiver asks for retransmission; that is what is mostly used on the Internet, but it would be impractical at interstellar distances. More likely it would be some form of forward error correction.
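As a small illustration of forward error correction, the sketch below implements the classic Hamming(7,4) code, which adds three parity bits to every four data bits so the receiver can repair any single flipped bit without asking for a resend. It is chosen here only because it is compact; real deep-space links use much stronger codes, and nothing in the paper specifies this one.

```python
def hamming74_encode(d):
    """Encode 4 data bits as a 7-bit codeword [p1, p2, d1, p3, d2, d3, d4]."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]

def hamming74_decode(c):
    """Correct up to one flipped bit and return the 4 data bits."""
    c = list(c)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_pos = s1 + 2 * s2 + 4 * s3  # 0 means no error; otherwise 1-based index
    if error_pos:
        c[error_pos - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]

data = [1, 0, 1, 1]
codeword = hamming74_encode(data)
codeword[4] ^= 1  # simulate one bit corrupted in transit
print(hamming74_decode(codeword) == data)
```

The relevance to technosignature searches is that a signal carrying this kind of redundancy has internal structure, which is one more thing an analysis pipeline could look for.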

I am a little disappointed but not surprised that they did not raise the issue of toxic information, as that concept is the antithesis of most scientists’ ideologies.

7. Travel Technosignatures

Although interstellar travel is often downplayed, aside from the time window problem, it might be the most likely technosignature to be detected. Sadly, it is usually dismissed as impossible by those who want to show their supposed intelligence over others’ ignorance. This mostly shows them cherry-picking facts and lacking imagination. The time window problem is discussed further up, where the question was whether they visited while we could observe them. In this particular case, the window is the time they spend traveling, because that is when we can observe them.

“In comparison to chemical rockets, a nuclear fission source of energy is ∼ 10⁵ more efficient, a nuclear fusion ∼ 10⁶ and the absolute theoretical maximum, matter-antimatter annihilation is ∼ 10⁸ times more efficient (Mallove and Matloff 1989). This means that crossing the threshold from chemical rockets to nuclear fission propulsion leads to a gain of 5 orders of magnitude in efficiency, while going from fusion to matter-antimatter means ’only’ gaining 3 orders of magnitude.”

I hadn’t come across this fact before, and it’s pretty interesting. It suggests that interstellar travel might not require matter-antimatter after all. Maybe it could be done with technology we already have or are close to developing.

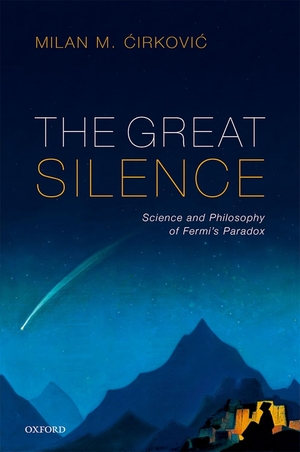

I wish the authors had extended the year scale further. That way, it would be easier to see where the Starshot project fits on the growth line.

“As Heller (2017) noted, reaching 0.1c would not happen before 150 years from now, assuming this exponential growth continues unabated. In that sense, the project might have been a few centuries ahead of its time!”

I have some doubts about Heller’s projection. It assumes that a project like Starshot would face the same energy limits as missions that are restricted by the rocket equation.
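To show what being restricted by the rocket equation means, here is a short sketch using the classical (non-relativistic) Tsiolkovsky equation. The exhaust velocities are round, assumed values for illustration, and the function name is my own. A beam-driven sail carries no propellant, so this limit does not apply to it in the same way.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def log10_mass_ratio(delta_v, exhaust_velocity):
    """log10 of initial/final mass from the classical Tsiolkovsky equation.

    delta_v = v_e * ln(m0 / m1)  =>  log10(m0 / m1) = delta_v / (v_e * ln 10)
    Returned as a logarithm because the ratio itself can overflow a float.
    """
    return delta_v / (exhaust_velocity * math.log(10))

target = 0.1 * C  # the 0.1c figure discussed above

# Assumed, illustrative exhaust velocities in m/s:
for name, v_e in [("chemical", 4.5e3), ("fusion-class", 1.0e7)]:
    print(f"{name}: mass ratio ~ 10^{log10_mass_ratio(target, v_e):.1f}")
```

Under these assumptions a chemical rocket would need a mass ratio with thousands of digits, which is why extrapolating a speed trend set by rockets to a sail pushed by external energy is questionable.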

“In the case of directed energy, Lubin (2016) proposed a general search for directed energy beaming activities, and Guillochon and Loeb (2015) proposed to look for leakage from a light sail spacecraft traveling between planets of a given stellar system. This search can be done in synergy with optical laser SETI searches. However, note that if the beam matches perfectly with the size of the sail, then there is no leakage to detect, so we would be looking for leakage from a system designed to minimize it, which may be hard.”

This idea assumes that leakage only happens in the forward direction. However, a system like this would actually have a lot of leakage in the backscatter, since the light needs to go that way for the system to function. It could also be harder to detect if the launch trajectory is directly opposite the launching star system.

7.4. Ramjet

7.5. Planet engines

7.6. Stellar engines

7.7. Newtonian gravitation for propulsion

More SETI MacGuffins.

7.8. Spacetime manipulation for propulsion: Spacetime Bubble Propulsion System, Traversable Wormholes

These ideas might turn out to be possible one day, but right now they are beyond what physics can explain. Since they do not fit into our current knowledge, guessing whether we could ever detect them is just speculation. For now, they are as unlikely as Harry Potter’s magic wand. Still, it is fun to imagine and share stories about them. After all, dreaming about the impossible has sometimes helped us turn fantasy into reality.

8. Galactic and Beyond

“Given the tremendous distances involved, the magnitude of energy usage that could feasibly be observed by astronomers here on Earth would have to be immense, implying that such technosignatures would have to be produced by Type III civilizations or beyond.”

This puts the whole concept firmly in the realm of SETI MacGuffins with low detection windows that approach the probability asymptote. If a civilization like that existed, we wouldn’t need to search for them because we would already be part of it.

8.4. The Simulation Hypothesis

In the end, all of this is really about the idea of living in a dream. This is more of a philosophical view, since if reality were a simulation, we could only notice it if the simulation let us.

9. Discussion

9.1. Biosignatures and technosignatures

“Technological fossils—traces of a previous civilization on Earth (see Section 2)—or technological trash, such as inactive, broken probes in our solar system, broken Dyson spheres (Loeb 2023), and as Holmes (1991) noted more generally, rubbish, debris, defunct equipment, and defunct spacecraft are also potential technosignatures. For attempts to quantify this longevity factor of technosignatures, see Lingam and Loeb (2019) and Ćirković et al. (2019).”

This might be our best chance to find signs of previous advanced civilizations on Earth. It is also among the best chances to find ETI. The chances of finding anything on Earth become vanishingly small as you approach deep time, because of geology and environmental factors.

“This is a blind spot in traditional natural sciences that seeks to study causal effects in a detached and objective way, and thus neglects or avoids the complexities of modelling agents (see Frank et al. 2024).”

“Thomas Kuhn (1996), who wrote in his foundational The Structure of Scientific Revolutions: “If all members of a community responded to each anomaly as a source of crisis or embraced each new theory advanced by a colleague, science would cease. If, on the other hand, no one reacted to anomalies or to brand-new theories in high-risk ways, there would be few or no revolutions.””

This issue affects all areas of science. Science operating as a business rather than a method often discourages ideas that challenge current thinking. As a result, the business side of science is a major reason our understanding of the world has not progressed much in the last 50 years and is becoming stagnant.

This section offers useful information on different ways to detect anomalies. In the end, finding ETI anomalies among all the noise will probably be the most important part of SETI.

“Arguably the ‘purest’ approach to signal analysis involves the use of Turing machines (Turing 1937) that represent the most general and universal of all computational devices.”

Science fiction

“The role of imagination is key to the scientific process. The core difference between science and science fiction is that science fiction aims to create emotional and engaging stories for human entertainment, while science tries to gain new insights, knowledge, and understanding, highly constrained by its methods and criteria. A systematic study of major science fiction novels to derive technosignature strategies would be worthwhile, although outside the scope of this paper. There is a rich interplay and synergy between science and science fiction (see Nováková et al. 2023): many new ideas start in science fiction and inspire scientists, while new scientific theories and discoveries inspire hard science fiction authors. However, science fiction is a double-edged sword for academic SETI. On the negative side, it contributes to the “giggle factor,” creating implicit associations between entertainment and serious science. On the positive side, science fiction addresses the question of extraterrestrial life and intelligence, which is so popular and fascinating that it is a huge opportunity for science education and outreach to draw people of all ages towards science.”

Scientists need to get over themselves and leave their ivory towers. The ivory towers are not reality, and they must stop hiding behind the business of science. This is where Carl Sagan excelled and did more than anyone before or since to draw people of all ages toward science. They need to build real world baloney detectors as Carl Sagan advised, not ones based on the business of science or their view from ivory towers. With a real world baloney detector, they would be equipped to understand and distinguish between something to giggle at and something to investigate.

Evolving Strategies in the Search for Extraterrestrial Civilizations 30 May 3:46 AM

Looking for extraterrestrial life in the form of biosignatures will involve peering into the constituents of a planetary atmosphere and identifying out-of-equilibrium gases that tell us something biological is going on. But here’s the problem, as demonstrated recently in work on the exoplanet K2-18b. We’ve identified dimethyl sulfide at this world, which might just be a life detection. On Earth, dimethyl sulfide comes from dimethylsulfoniopropionate (DMSP), a compound that is produced by phytoplankton and has a clear role to play in marine ecosystems. But subsequent research on K2-18b has pointed to possible instrumental errors and the relatively low level of statistical confidence in the detection.

Nothing turned up in a SETI check of this world with the Very Large Array (New Mexico) and MeerKAT (South Africa, precursor to the Square Kilometre Array), which isn’t particularly surprising. We’re going to be getting more biosignature candidates in the coming decades as our instrumentation keeps improving, but even with the Habitable Worlds Observatory, the result of any interesting detection is going to be a race to figure out ways to produce the same gases without biology. Microbial life may be all over the galaxy (I suspect that it is), but I’m less and less sure we’re going to get any biosignature detections that anyone will consider ironclad this way.

Emergence of the Technosignature

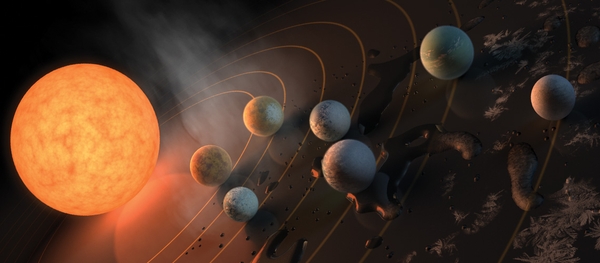

That makes technosignatures more interesting than ever, especially since there is a good case to be made that any civilization we detect will be substantially older than ourselves, and thus gifted with technological powers we may not be able to imagine. Astroengineering is but one wonderful example of what we might encounter, but would we recognize it? Asking the same about vast structures like Dyson spheres or swarms is a hot topic because we do have current tools for observing them, and also archival data that may just contain evidence of them. But we have to know what to look for.

To that end, a new paper has just arrived that is going to be a touchstone for technosignature research for some time to come. Clément Vidal (Vrije Universiteit Brussel, Brussels, Belgium) and a large team of co-authors have produced the critical review document needed to consolidate what has been done so far and help newcomers to the work orient themselves with the directions that will be needed next. This is a satisfying event, because it also comes at a time when we are about to get the first graduate-level text on interstellar flight, which should itself inspire future careers. We’ve also just had Jason Wright’s first-rate textbook on SETI, which means that we are preparing the way for interstellar issues to become embedded in college and graduate school curricula. And that’s how we get the next generation of scientists.

Image: Philosopher Clément Vidal’s background in logic and cognitive sciences has led him into new formulations for SETI, as in his 2014 book The Beginning and the End: The Meaning of Life in a Cosmological Perspective. The current paper is an in-depth examination of past and present searches for technosignatures, with suggestions on the path forward. Credit: Clément Vidal.

Both these books are splendid, and I’ll have more on each as soon as the former is publicly released (very soon now). For now, though, let’s dig into the Vidal paper, which acknowledges the new Wright text and its coverage of the theory and practice of technosignature science as well as SETI itself. What Vidal and team set out to do is to create a definitive reference for the kind of technosignatures that have emerged thus far in the field, and the methods we might use to detect them. Those of us who think in terms of distant stars as possible sites for technosignatures may be surprised at the spatial scale strategy here, which actually begins with technosignature searching on Earth, then out to the Moon, the inner Solar System, the Oort Cloud and into interstellar space.

As I’m always interested in archival searches, I want to note the work of Beatriz Villarroel and team on plates from Palomar Observatory from the 1950s. This is of course before the satellite era, so it’s intriguing to find a number of unexplained point sources that disappear from subsequent plates. As a check against artifacts of the photographic emulsion, the team looked at Earth’s shadow and found a statistically significant (22 sigma) deficit of transients there. In other words, emulsion flaws are unlikely to be the source of the detected transients. Conceivably they could have been reflective objects that disappeared when entering the shadow. The findings are still being debated in the literature, but they point to the prospect of future searches on even older astronomical plates.

Image: This is Figure 5 of the paper. Caption: Nine simultaneously occurring transients on April 12th 1950, from Villarroel et al. (2021): 10 x 10 arcmin field shown in POSS-1 and POSS-2 red bands. In the POSS-1 image we see a number of objects that cannot be subsequently found, marked with green circles. Purple circles are artifacts during the scanning process. About 9 objects are present in the POSS-I E image (left) from the 12th of April, but not in the POSS-2 image (right) from 1996. One slightly larger circle host two transients. In addition, the 9 objects are neither visible in the blue POSS-1 taken half an hour earlier, nor in a second POSS-1 red image taken six days later on April 18th. The 9 transients are not caused by a difference in depth or spectral sensitivity. The images are based on the DSS digitizations of the Palomar plates.

I won’t go through all the levels the authors mine other than to say that we move from searches for past Earth visitation and current research into Unidentified Aerial Phenomena through the question of ‘lurker’ or Bracewell probes in nearby space and even explore the Solar Gravitational Lens before moving into the kind of exoplanetary and stellar technosignatures that have thus far commanded the most attention in the field. We can’t limit this to our own galaxy because productive work has been done, especially by Wright’s team at Penn State, in examining numerous galaxies for the possible infrared signature of Dyson spheres or other large scale technologies.

Expanding the Already Daunting Search Space

Whereas the original Cocconi and Morrison paper on SETI (1959) assumed a signal deliberately sent in our direction by a species wanting to announce its presence, technosignatures demand no such intent to communicate, and rather than confining ourselves to particular slices of the electromagnetic spectrum, we should consider the possibility of going well past current laser strategies into areas only now coming online. Thus the emergence of low-frequency SETI via the multi-site LOFAR stations in Europe. Or consider the benefits of high-energy photons via X-ray lasers, which can offer high rates of data transmission. Let me cite the paper on this:

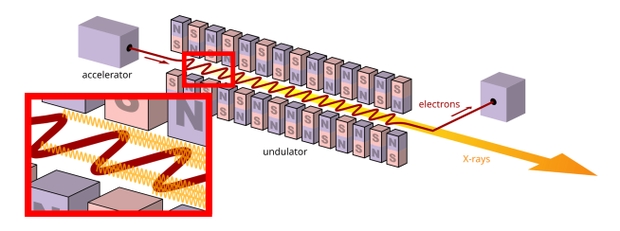

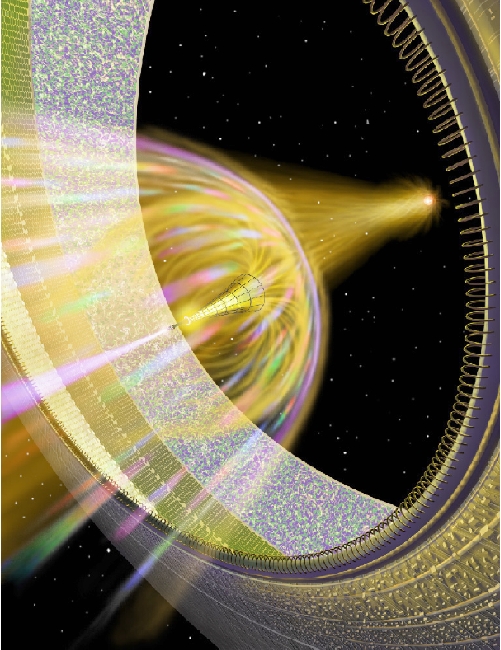

In particular, X-ray lasers are capable of producing highly focused and intense X-ray beams with a very narrow divergence angle which allows for highly energy-efficient interstellar communication. While natural astrophysical sources of X-ray emissions are generally characterized by specific spectral lines, we could search for free electron lasers, which accelerate free electrons to nearly the speed of light, directing them through an alternating magnetic field in a way that produces highly coherent X-ray pulses (see Figure 20). Although above our present technological capabilities, fusion-powered X-ray lasers are another possibility to generate X-ray pulses.

Image: This is Figure 20 from the paper. Caption: Figure 20. A schematic illustration of a X-ray Free Electron Laser (XFEL). An electron gun fires a beam of electrons that are directed through an undulator after being accelerated through a particle accelerator. The beam of electrons then passes through an undulator, which is a periodic arrangement of magnets whose function is to produce the highly coherent X-ray pulses/beam. Diagram courtesy of Wikipedia, based on (Patterson and Abela 2010).

Another advantage: lower background radiation as compared to radio waves, which makes signals in this frequency range easier to detect against natural sources. Finding patterns of X-rays that do not jibe with natural sources would be sufficiently anomalous to catch our attention. X-rays also have advantages over longer wavelengths like radio waves because phenomena like scintillation are far less of a problem. I was interested to see that there have been archival searches through X-ray data. Michael Hippke and Duncan Forgan found in a 2017 paper that 19 candidate signals were present but could most likely be traced to astrophysical causes.

Bear in mind that at our current level of technology, a spectrum via the Chandra X-ray instrument takes five days to build. One suggested path forward is to move toward highly sensitive instruments with the necessary spectral resolution to detect the kind of narrow X-ray emissions such communications would represent. So it’s good to know that beyond Chandra and XMM-Newton we can look toward efforts like the European Space Agency’s Advanced Telescope for High ENergy Astrophysics (Athena), an X-ray telescope armed with the X-ray Integral Field Unit (X-IFU) for high-resolution spectroscopy. A NASA flagship mission based on a concept called the Lynx X-ray Observatory made it into the 2020 Decadal Survey but as far as I know is not yet a confirmed mission.

So as we explore problematic options like X-rays (and the paper notes that G-class stars are good candidates here because they do not produce strong X-ray emission lines), we also push into little-considered options like gamma rays, perhaps a signature of advanced propulsion. We also find interesting discussion in the paper on expanding the range by looking for communications signals happening within a target exoplanetary system, particularly as we begin to shift our own deep space communications into the laser range. Directed energy systems of the sort we have often considered here would produce a detectable signal, as would planetary radars used for self-defense purposes.

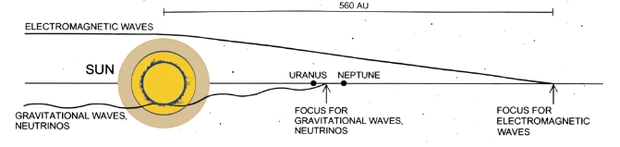

Neutrinos, Gravitational Waves and Other Exotica

And here’s an interesting thought. As far back as Philip Morrison in a 1962 paper, neutrino communication has been suggested for an advanced civilization. Neutrinos react only slightly with matter, meaning that most of the Sun’s outer layers would be transparent to them, with only the dense core layers capable of absorbing them. That means the Solar Gravitational Lens effect for neutrinos starts in the range of 30 AU, roughly the orbit of Neptune. A search for a Bracewell probe is thus possible at a distance much closer than a photon-based signature from a probe at 550 AU.

Image: This is Figure 8 from the paper. Caption: The Solar Gravitational Lens (SGL) is a region where gravitational and neutrino radiation starts to focus (respectively at 22.45 AUs and 29.6 AUs) while the focus of electromagnetic (EM) rays starts from 547 AUs. Human or ETI observational or transmitting probes placed at these regions would benefit orders of magnitude of gains. Figure adapted from (Maccone 2009, xxxi). Credit: Vidal et al.
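The electromagnetic figure in that caption can be checked with the standard thin-lens result for light grazing a point mass, where rays with impact parameter b converge at a distance b²c²/(4GM). The sketch below is only a sanity check under that assumption; the function name and the constants (nominal solar radius, solar gravitational parameter, astronomical unit) are supplied here and are not taken from the paper. The neutrino and gravitational wave distances depend on how the Sun's mass is distributed in its interior, so they are not reproduced.

```python
# Minimum focal distance of the Solar Gravitational Lens for light that
# grazes the solar limb, using the point-mass deflection angle 4GM/(b c^2).
# All constants are standard nominal values, assumed for illustration.
GM_SUN = 1.32712440018e20   # m^3 s^-2, solar gravitational parameter
C_M_S = 299_792_458.0       # m/s, speed of light
R_SUN_M = 6.957e8           # m, nominal solar radius
AU_M = 1.495978707e11       # m, astronomical unit


def focal_distance_au(impact_parameter_m, gm=GM_SUN):
    """Distance (in AU) at which rays with this impact parameter converge."""
    focal_m = impact_parameter_m ** 2 * C_M_S ** 2 / (4.0 * gm)
    return focal_m / AU_M


if __name__ == "__main__":
    print(f"EM focus begins near {focal_distance_au(R_SUN_M):.0f} AU")
```

With these inputs the result lands within about an AU of the 547 AU in the caption. Rays passing farther from the limb focus farther out, which is why the lens is described as a region that starts at that distance and not as a single point.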

The authors note that neutrinos have been proposed for communications with submarines as well as interstellar uses. From the paper:

Their extremely low interaction cross-section makes them good candidates for interstellar communication, since they rarely interact with matter: they can travel through interstellar dust, gas clouds, planetary and stellar objects, or even strong magnetic fields surrounding pulsars and neutron stars with negligible attenuation. In other words, neutrino emissions are immune to common interstellar communication issues like dispersion, scattering, absorption, or polarization rotation (problems prevalent with electromagnetic signals). This enables neutrino signals to propagate across interstellar distances while maintaining coherence and fidelity.

Supposing an interstellar civilization wanted to create an aeon-spanning beacon of the sort imagined by some SETI advocates, a neutrino signal would have the advantage of standing out as distinctly structured in whatever modulation scheme chosen. The energy demands of a system like this are unimaginably beyond our own, but searching for technosignatures demands thinking in extravagant terms. With neutrinos the senders free themselves from issues of dispersion and scattering, producing a signal that can reach across the galaxy and remain coherent. I also want to mention Centauri Dreams regular Al Jackson’s take on such a technology in A Neutrino Beam Beacon, based on his 2019 paper. Al has also published with Greg Benford on gravitational wave transmitter concepts. It would be startling to find that the actual galactic conversation was taking place via gravitational wave methods.

My talking about X-rays, gamma rays and neutrinos is just a way of opening the window into the range that this lengthy paper covers. Who knew, for example, how much work had already gone into the theoretical detection of a starship? The various angles into the matter include analyzing motivations for starflight itself, the chief of which must surely be the continuing existence of a species. From the paper:

Survival motivations include avoiding a death threatening supernova or migrating towards a nearby star as the home star fades away (Zuckerman 1985; Hansen and Zuckerman 2021). A pioneering study by Hansen (2022) looked for close stellar encounters in the solar neighborhood. The strategy is then to look for active interstellar migration, where “generation ships” are sent during a close encounter window, hitting this window because it would cost orders of magnitude less time and energy than crossing the otherwise vast interstellar spaces. Hansen proposes this method as a way to constrain search targets because a lot of heat or communication signatures might be associated with such migration.

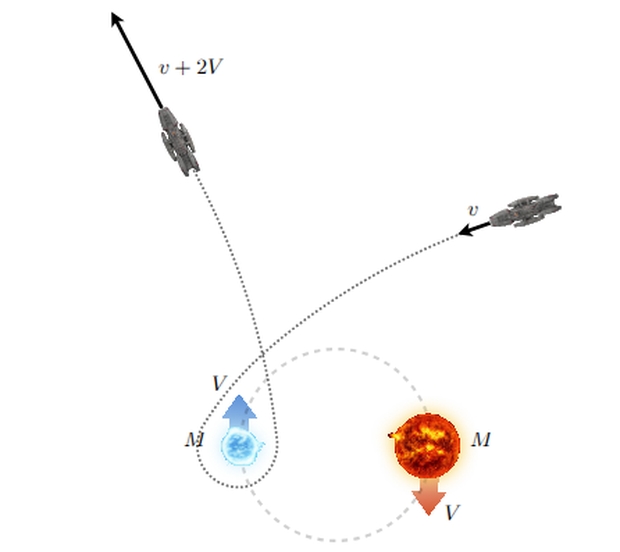

Interesting, to be sure, but look how many starship technosignature ideas spin out of it. Let’s assume two habitable zone planets around the same star, or perhaps in a binary system, so that civilization has expanded to set up technologies on both worlds. Here’s prime fodder for a technosignature search, and indeed TOI-2267 is a recently discovered example. We might then look for travel signatures as well as communications, a particularly interesting idea when both planets transit.

Image: This is Figure 25 from the paper. Caption: Illustration of a gravitational machine (Dyson 1963) for accelerating spacecraft using binary star orbital energy. Diagram based on Mallove and Matloff (1989, p. 141). Credit: Vidal et al.

Researchers have considered three-body interactions that result in high-velocity ejections, or even waste signatures (‘interstellar contrails’), perhaps to be found in archival data. Robert Zubrin has studied cyclotron radiation caused by the interaction of the interstellar medium with a magnetic sail, while Ulvi Yurtsever and Stephen Wilkinson have worked on the interactions of a relativistic spacecraft with Cosmic Microwave Background (CMB) photons.

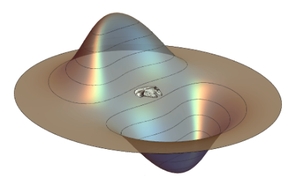

The list could go on, and I haven’t even gotten into Alcubierre-class ‘warp’ drive vessels and the perplexing technosignatures these might produce. Well, this I just have to quote, as temporal matters have their own fascination. Here the authors are discussing what they call a ‘bi-modal signal’ unique to a warp drive craft, for the bubble of spacetime as we observe it is moving faster than the speed of the emissions it is sending out:

This mechanism is a purely craft motion effect, since the craft is moving super-luminally, essentially outrunning the signals it produced earlier in its path. Thus, a distant observatory would record emissions that occurred at two different times simultaneously. One signal would move in the apparent direction of the craft’s motion, showing the emissions occurring in the correct, forward order in time. The second highly unusual signal would move in the opposite apparent direction, presenting the craft’s emissions in a reversed temporal order. This technosignature is considered a key observable (Lentz and Felton 2024) because there is no known natural phenomenon that could produce such a signal.
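The doubled image in that passage falls out of simple light-travel-time bookkeeping, which a toy model can show. The sketch below is not the Lentz and Felton calculation: it assumes flat space, a craft moving in a straight line at a constant speed above c, and an observer at rest off to one side. The function names, the speed of twice lightspeed and the units (c = 1) are all hypothetical choices made for illustration.

```python
import math

C = 1.0  # speed of light; toy units where c = 1 (assumption)


def arrival_time(t_emit, v=2.0, d=1.0):
    """Observer-clock time at which light emitted at t_emit arrives.

    The craft moves along the x axis with x = v * t; the observer sits at
    perpendicular distance d from the track, abeam of x = 0.
    """
    return t_emit + math.hypot(v * t_emit, d) / C


def emit_times_seen_at(t_obs, v=2.0, d=1.0):
    """Emission times whose light reaches the observer exactly at t_obs.

    Squaring C * (t_obs - t) = sqrt((v t)^2 + d^2) gives a quadratic in t.
    Requires v > C. Returns a sorted list: empty before the first sighting,
    two roots (two simultaneous images) after it.
    """
    a = C * C - v * v
    b = -2.0 * C * C * t_obs
    c0 = C * C * t_obs * t_obs - d * d
    disc = b * b - 4.0 * a * c0
    if disc < 0.0:
        return []
    root = math.sqrt(disc)
    candidates = ((-b - root) / (2.0 * a), (-b + root) / (2.0 * a))
    # Keep only physical roots: light must be emitted before it is received.
    return sorted(t for t in candidates if t <= t_obs)


if __name__ == "__main__":
    for t_obs in (0.5, 2.0, 3.0):
        print(t_obs, emit_times_seen_at(t_obs))
```

Before the first light arrives the list is empty. Afterwards each observation time maps to two emission times: the larger one increases as the observer's clock advances (the forward-running image), while the smaller one decreases (the image that plays the craft's earlier path in reverse).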

Image: This is Figure 26 from the paper. Caption: The York-time representation of an Alcubierre spacetime bubble, showing a localized region of warped space with contracted space ahead and expanded space behind. Credit: Vidal et al.

Getting Technosignatures into the Universities

Whether a lightsail, a ramjet, or even a planetary or stellar engine, the interstellar craft has been examined in terms of observational consequences as what we often call ‘Dysonian SETI’ evolves. The unusual waveform of a starship undergoing velocity changes is worth noting as well, again a matter of developing the future tech in the form of sufficiently sensitive gravitational wave detectors. The authors point out that natural objects are also in the mix. Could ejected rogue planets be carrying interstellar colonists, a huge generation ship that might be identified through analysis of its trajectory?

We should have plenty to work with closer to home with the upcoming availability of the Vera Rubin Observatory and the Nancy Grace Roman Telescope looking for objects on hyperbolic trajectories that may be cometary or conceivably technological debris or even active probes. Anomalous occultations in the outer Solar System are obvious targets for existing resources, and radio searches of the Solar Gravitational Lensing region between the Sun and Alpha Centauri have already been conducted. Given the proximity of the outer regions of the Oort Cloud with what may be a comparable region around the Alpha Centauri stars, technosignature searches here seem warranted as well.

I send you to this paper with enthusiasm. Its 118 pages are packed with ideas and as you can see, hardly limited to what we might detect on an exoplanetary surface, although those settings do of course come into play. Given how exciting it has been to witness the birth of direct exoplanet observation since the mid-90s, the extension and consolidation of new ideas for SETI is moving along a similarly fast track, with the obvious and overwhelming exception that it has yet to uncover the kind of observable its practitioners are hoping to find. The massive upgrade in available data that Breakthrough Listen has provided has resulted in no detections. The notion that we have only begun to search is wearing thin. As Jim Benford puts it, “It is too late to say that it is too early to tell.” Clearly, the Fermi question maintains its vitality, and its implications.

The paper is Vidal et al., “The Search for Technosignatures: a Review of Possibilities,” begun as a collective workshop at the Penn State SETI Symposium (PSETI 2023) and now available as a preprint.

Turbulence Between the Stars 22 May 4:54 AM (18 days ago)

We’ve come a long way since the days when interstellar space – and even the environment of our own planetary system – was considered empty. Dust and gas between the stars factor into deep space thinking in many ways given their potential uses and dangers, from hydrogen clouds serving as fuel for a Bussard-style ramjet to the perils of impact with dust grains that can degrade or even penetrate a hull. It’s also clear that a true interstellar map would have to chart such features as the Local Interstellar Cloud, mostly made up of hydrogen and helium, itself inside the ‘bubble’ created by an ancient supernova.

Collecting data on the LIC is enabled by spacecraft like the Interstellar Boundary Explorer and the Voyager probes, the latter of which have long demonstrated the utility of resources outside the heliosphere. Moreover, even as we monitor the LIC, a kind of interstellar turbulence is ahead. Our Solar System nears the LIC’s edge, a crossing that in several thousand years will see us transitioning into the G-Cloud, where changes to the size and shape of the heliosphere due to these boundary crossings could affect the protective screen that shields us. Galactic cosmic rays are threats to biology, elevating cancer risks and damaging DNA. We need the heliosphere’s magnetic bubble.

I’m intrigued by recent work out of the Harvard & Smithsonian Center for Astrophysics, which has found a new way to analyze this turbulence over much longer timeframes and distances. The notion here is that ionized gas and electrons throughout the galaxy can be detected by analyzing the radio signature of distant objects as it passes through this material. What is new here is the insight into the structure of the turbulence as it scatters light.

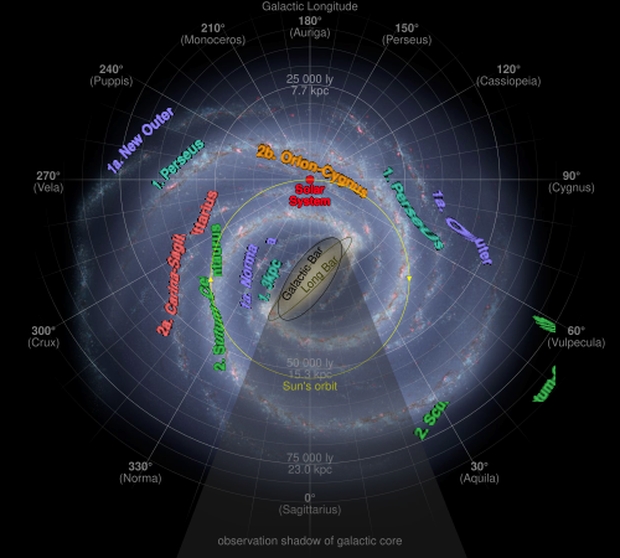

To make this analysis happen, the authors of the new paper in The Astrophysical Journal Letters have been examining a decade of archival observations from the Very Long Baseline Array (NSF VLBA), which cover the findings of ten radio telescopes located across the United States. The quasar TXS 2005+403, perhaps 10 billion light years away in Cygnus, provides the bright radio source whose wavefront moves through a region considered one of the most turbulent and strongly scattering regions of the galaxy.

Image: Artist’s conception of the Milky Way galaxy as seen from far Galactic North (in Coma Berenices) annotated with arms as well as distances from the Solar System and galactic longitude with corresponding constellation. Note the Sun’s galactic orbit in the image. Credit: NASA/JPL-Caltech/ESO/R. Hurt derivative work: Cmglee/Wikimedia Commons.

What is particularly useful here is that our viewpoint from Earth takes in the length of the Orion-Cygnus spiral arm, which means we have the benefit of looking through one layer of interstellar material after another. The density of the gas and dust here is the key. Interactions with galactic cosmic rays make the region bright in gamma-ray radiation, but the area also is useful for studying the compression of these clouds as new star generations are born. The Cygnus Molecular Nebular Complex is one of the largest star-forming areas in the Milky Way, containing numerous clusters and stellar associations.

The persistent patterns found in the VLBA data as analyzed in this paper show the kind of distortions that mark interstellar turbulence. Lead author Alexander Plavin (CfA) explains how the quasar’s light makes the case:

“Most of what we see in the radio data isn’t coming from the quasar itself, it’s coming from the scattering caused by the turbulence in this region of the Milky Way. That scattering and the distortions that come with it are what allows us to study the turbulence and better understand and infer its structure. The most distant pairs of telescopes should not have seen the quasar image, but to our surprise, they clearly detected its signal, or faint glow. It can’t be explained by simple blurring or by the quasar itself, and it behaves the way turbulence is expected to, which is how we know we’re seeing the effects of interstellar turbulence.”

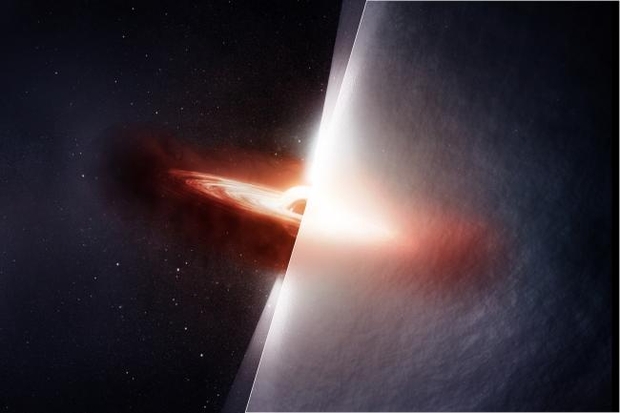

Image: Radio light from quasar TXS 2005+403 travels roughly 10 billion light-years to reach Earth, traversing the Cygnus region, one of the most turbulent and scattering environments in the Milky Way Galaxy. On the left, this artist’s conception shows the quasar as it truly appears, with a bright accretion disk and jets blasting into the galaxy like a beacon through the darkness. On the right, we see how turbulent gas distorts scientists’ view of the quasar in much the same way heat haze from a fire warps our view of the objects behind it. In a new study led by astronomers from the Center for Astrophysics | Harvard & Smithsonian (CfA), scientists have for the first time directly detected how interstellar turbulence distorts light from a distant quasar, revealing the structure of that turbulence. Credit: Melissa Weiss/CfA.

Thus the quasar TXS 2005+403 proves to be a helpful indicator, refining our understanding of the interstellar medium. From the paper (the italics are mine):

The source combines several crucial properties: (i) high flux density (∼2 Jy), enabling detections with routine VLBI; (ii) compact intrinsic structure on milliarcsecond and submilliarcsecond scales, necessary for scattering to dominate the observed morphology; (iii) structural stability on timescales of months, unlike Sagittarius A*, where intrinsic variability complicates interpretation; and (iv) strong scattering due to its location behind the turbulent Cygnus region. This detection suggests that similar AGNs in other strongly scattering regions could be identified…enabling systematic studies of Galactic turbulence and magnetic field structure across the sky. Improved understanding of scattering properties from sources like TXS 2005+403 would directly inform efforts to mitigate scattering artifacts in Event Horizon Telescope images of the black hole in the center of the Milky Way, where scattering limits image fidelity, and would help interpret propagation effects in fast radio bursts.

And what of the gas and dust our own system continues to pass through? I have my eye on a paper in Physical Review Letters that meshes nicely with the CfA work. Here, an international team coordinated its efforts through the Helmholtz-Zentrum Dresden-Rossendorf (HZDR). This German research organization maintains the DREsden Accelerator Mass Spectrometry (DREAMS) package, which allows scientists to work with radioactive isotopes that result from our Solar System interacting with the interstellar medium. Their latest work studies Antarctic ice and deep sea sediments in a range of 40,000 to 80,000 years ago in search of iron-60, which is produced in core-collapse supernova events. This radioactive isotope is a kind of smoking gun for such explosions.

The concentration of stardust graphed over time in the different layers of ice cores offers a timeline that allows us to understand our planet’s journey through different parts of the Local Interstellar Cloud. The Sun moved into the LIC several tens of thousands of years ago, and will exit it in a few thousand more. The paper makes the case that stellar debris from supernovae can persist over long timeframes within the cloud. Less iron-60 reached the Earth 40,000 to 80,000 years ago than reaches it today. As Dominik Koll (HZDR) says, “This suggests that we were previously in a medium with lower iron-60 content, or that the cloud itself exhibits strong density variations.”
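One quick check on those timescales is how much iron-60 would simply have decayed while sitting in the ice. The sketch below assumes a half-life of roughly 2.6 million years, a value supplied here and not quoted from the paper, and the function name is hypothetical.

```python
# Fraction of deposited iron-60 that survives radioactive decay in the ice.
# The half-life is an assumed round value of about 2.6 million years.
FE60_HALF_LIFE_YR = 2.6e6


def surviving_fraction(age_yr, half_life_yr=FE60_HALF_LIFE_YR):
    """Fraction of the original atoms remaining after age_yr years."""
    return 0.5 ** (age_yr / half_life_yr)


if __name__ == "__main__":
    for age in (40_000, 80_000):
        print(f"{age:>6} yr: {surviving_fraction(age):.3f} of the iron-60 remains")
```

Under that assumption, decay removes only a couple of percent over 80,000 years, so a drop much larger than that between older and younger layers would have to reflect a change in what was arriving, which is consistent with the reading Koll gives.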

The authors consider this evidence for the LIC as what they call a ‘cosmic archive’ for the iron-60 produced in supernovae explosions. Its varying levels show a changing interstellar environment over the last 80,000 years. Koll adds:

“Our idea was that the Local Interstellar Cloud contains iron-60 and can store it over long time periods. As the Solar System moves through the cloud, Earth could collect this material. However, we couldn’t prove this at the time. This means that the clouds surrounding the Solar System are linked to a stellar explosion. And for the first time, this gives us the opportunity to investigate the origin of these clouds.”

To perform their analysis, the team used ice cores from the European ice drilling project EPICA (European Project for Ice Coring in Antarctica). They moved 300 kilograms of ice to the Dresden laboratory for processing, checking their sample against the radioisotopes beryllium-10 and aluminium-26, whose abundances in the ice are well known. The Heavy Ion Accelerator Facility (HIAF) at Australian National University was then used to separate out the iron-60 atoms to detect the signature of supernovae that occurred millions of years ago.

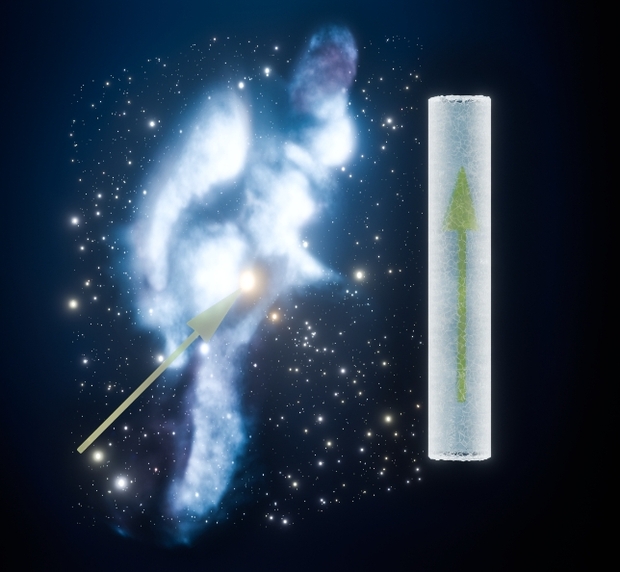

Image: Path of the solar system through the Local Interstellar Cloud. The cloud’s profile is preserved as an interstellar fingerprint in Antarctic ice. Credit: B. Schröder/HZDR/ NASA/Goddard/Adler/U.Chicago/Wesleyan.

The timescales here are striking, giving some idea of the capability of interstellar clouds to affect the stellar systems that move within them. Analyzing ice cores dating from before the Sun’s entry into the Local Interstellar Cloud is an objective for the team’s next round of measurements.

Science fiction buffs will likely recall Stephen Baxter’s writing on the matter of interstellar dust, especially in the novel Manifold: Space (Voyager, 2000). Here, dust and radiation waves kicked up by high-energy astrophysical events act to disrupt biology, a kind of galactic ‘reset’ that goes a long way toward explaining why interstellar civilizations have never been observed. The answer to the Fermi question in this novel is a non-malevolent but devastating natural phenomenon. Each of Baxter’s three Manifold novels, incidentally, offers a different take on the Fermi question, which continues to drive its own wavefront of SF plot ideas.

The paper on interstellar turbulence is Plavin et al., “Direct Very Long Baseline Interferometry Detection of Interstellar Turbulence Imprint on a Quasar: TXS 2005+403,” Astrophysical Journal Letters Vol. 1003 No. 1 (13 May 2026), L4 (full text). The paper on dust and cloud structure is D. Koll et al., “Local Interstellar Cloud Structure Imprinted in Antarctic Ice by Supernova 60Fe,” Physical Review Letters 136 (12 May 2026), 192701 (full text).

Przybylski’s Star: Still Bizarre After All These Years 15 May 5:29 AM (25 days ago)

While I’ve been going through early extraterrestrial ideas like those of Ronald Bracewell I’ve run back into that most unpronounceable of stellar objects, Przybylski’s Star. This one is worth a return look and I was reminded of it by author and futurist John Michael Godier on his Event Horizon podcast. I do few interviews but I’ve always admired John and Ross’s work on Event Horizon so much that I made an appearance last week. It was John who summoned up Przybylski’s Star as we moved into the broader topic of technosignatures.

Image: Antoni Przybylski in the early 1960’s. Credit: Mike Bessell (via Charles Cowley’s site).

David Kipping does a gallant job of pronouncing Przybylski in one of his Cool Worlds videos, and Wikipedia recommends pʂɨˈbɨlskʲi, which is itself a challenge. Try jebilskee, which is what University of Michigan astronomer Charles Cowley heard when he asked Przybylski himself how to say his name way back in 1964 (the reference is now offline, as Cowley unfortunately passed away in 2024). In any case, suppress the initial ‘p.’ I can’t resist reprinting an old pre-X Twitter post on the matter:

PRZYBYLSKI'S STAR (HD 101065) Blue dwarf with a peculiar spectrum showing an almost complete absence of vowels.

— FSVO (@FSVO) November 22, 2012

This star is more than a curiosity. As a matter of fact, if I were to name the single most intriguing object in the technosignature hunt, it’s this one, although I’ll hasten to add that we’d need a lot more evidence before making that call. Przybylski’s Star is roughly 350 light years out in Centaurus, discovered in 1873 but gaining attention in 1961 when the Polish astronomer Antoni Przybylski examined its spectrum to discover that it didn’t fit our normal stellar classification scheme. I’ve seen it pegged as an F3-class star but also as an F0p, with the p standing for peculiar. If we go by effective temperature, we come up with early F-class, but its spectrum separates it from all else in that category.

It’s also referred to as an Ap star (this is Kipping’s preference), and whereas F0p is a designation based on temperature, Ap refers to stars larger and hotter than the Sun and possessed of intense magnetic fields and slow rotation rates. What to make of the star’s spectrum? It’s laced with oddball elements like europium, gadolinium, terbium and holmium. Moreover, while iron and nickel appear in low abundances, the stellar atmosphere shows the presence of short-lived ultra-heavy elements like actinium, plutonium, americium and einsteinium.

The latter were identified in 2008. Called actinides, these are elements with atomic numbers from 89 to 103 on the periodic table. They force the question of how radioactive elements with half-lives on the order of centuries or even decades could be there. How are these reactions being sustained on the surface of a star? The reference here is an important if strangely obscure one. The work of a Ukrainian team under V. F. Gopka, the paper is “Identification of absorption lines of short half-life actinides in the spectrum of Przybylski’s Star (HD 101065)” (citation below). David Kipping (on an earlier Event Horizon podcast) and Jason Wright (Penn State) have both mused on the lack of follow-up to it, even though the work seems solid and has implications in terms of technosignature searches.

We also have a 2017 paper by Vladimir Dzuba (University of New South Wales) that offers an interesting solution. The idea is that the short-lived actinides in Przybylski’s Star are the result of undiscovered superheavy elements (a theoretical ‘island of stability’ on the periodic table) which can survive for millions of years. In this model, it is the decay of these elements that produces the lighter ‘daughter’ products that are found here, including such things as plutonium and uranium. A possible origin for such superheavy elements is a nearby supernova explosion whose shockwave would have fed this matter directly into the forming star.

Image: Przybylski’s star, image center. By Vizzualizer – Own work, CC BY-SA 4.0.

What we’ve seen in intervening years is discussion of whether the spectral data have simply been misinterpreted, or whether a nearby neutron star might be bombarding the atmosphere of Przybylski’s Star, but there is no observational evidence for such a companion. The island of stability idea has yet to be confirmed in the laboratory, although this work continues. I’ll also mention the star HD 25354, another ‘peculiar’ star, this one in Perseus, which is now being investigated. It contains unstable radioactive elements in its upper atmosphere.

So we have an ongoing mystery, one with tantalizing reminders of a 1980 paper from Daniel Whitmire and David Wright called “Nuclear waste spectrum as evidence of technological extraterrestrial civilizations.” Here the concept is using a star as a repository for radioactive waste. The authors homed in on A stars as being likely candidates. I suspect they were thinking about Sagan and Shklovskii in their book Intelligent Life in the Universe (Delta, 1968), where the authors speculate on the possibility of ‘salting’ a star to call attention to it, a kind of interstellar beacon. Look, a civilization is saying, there is intelligence near this star.

I mention Sagan and Shklovskii pointedly because while both are frequently fused into a single entity in later descriptions of their era, the duo had profound disagreements on a lot of things, especially the kind of SETI embodied in the Drake Equation. On the matter of ‘salting’ a star, it’s Sagan who references Shklovskii as well as a separate Drake paper on the concept, not claiming it for himself. From the book:

Drake and Shklovskii envision the dumping of a short-lived isotope – one which would not be ordinarily expected in the local stellar spectrum – into the atmosphere of the star. In any case, the material of the marker should be of a type that is difficult to explain, except as a result of intelligent activity…. Remarkably enough, the spectral lines of one short-lived isotope, technetium, have in fact been found in stellar spectra… This example illustrates one of the difficulties with such a marker announcement of the presence of a technical civilization. We must know a great deal more than we do about both normal and peculiar stellar spectra before we can reasonably conclude that the presence of an unusual atom in a stellar spectrum is a sign of extraterrestrial intelligence.

So could the spectrum of Przybylski’s Star actually be a technosignature? You can see how difficult this problem is considering the rarity of this kind of star. Even so, a look at The Catalog of Ap, HgMn, and Am Stars reveals over 8,000 ‘peculiar’ stars, ranging across the temperature scale. I dug into the catalog with AI to learn that Ap and hotter Bp stars are the largest subgrouping, both characterized by unusually strong magnetic fields. There is much work here for aspiring graduate students because we need to learn whether the actinides at Przybylski’s Star are simply a rare natural phenomenon or a technical marker.

Przybylski’s original paper on the star is “HD 101065-a G0 Star with High Metal Content,” Nature Vol. 189, Issue 4755 (1961) 739 (abstract). Jason Wright’s three-part essay on Przybylski’s Star is well worth your time. The paper identifying actinides in this star is Gopka et al., “Identification of absorption lines of short half-life actinides in the spectrum of Przybylski’s star (HD 101065),” Kinematics and Physics of Celestial Bodies Vol 24, Issue 2 (April 2008) 89-98 (abstract). The Whitmire and Wright paper is “Nuclear waste spectrum as evidence of technological extraterrestrial civilizations,” Icarus Vol. 42, Issue 1 (April 1980), 149-156 (abstract). Vladimir Dzuba’s paper is “Isotope Shift and Search for Metastable Superheavy Elements in Astrophysical Data,” Physical Review A 95 (30 June 2017), 062515 (abstract).

A Deeper Dive into the Proxima Centauri Swarm 9 May 5:36 AM (last month)

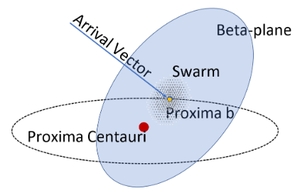

I’m always interested in how work on interstellar concepts gets funded. After all, although the Nancy Grace Roman telescope is now ready to fly, with a launch some time this fall, there was a real chance the project might get canceled along the way. Trying to predict what will happen to NASA’s budget is harder now than ever. Thus I followed Marshall Eubanks and team’s work on swarm technology missions to Proxima Centauri with interest, learning in their new paper that their NIAC funding continues along with a grant from Breakthrough Starshot’s Communications Group. That last is itself interesting, as communications was, I’ve been told, the toughest nut to crack in setting up swarm strategies for tiny sailcraft – a few grams each – for Proxima Centauri b. Some of this work was performed at the Jet Propulsion Laboratory as well.

Imagine our swarm as consisting of 1000 lightsails launched in a one-month window, boosted by the kind of laser array Breakthrough Starshot has advocated, an Earth-based installation high in the Chilean desert. The research team refers to these sailcraft as ‘coracles,’ a nod to a traditional bowl-shaped boat common to the northern British isles and Ireland. Reaching a velocity of 20 percent of lightspeed, the probes are to be assembled into a coherent swarm using drag from the Interstellar Medium (ISM). At these velocities, this flow of neutral and charged particles can shape them into coherency on the order of 100,000 kilometers transverse separation; i.e., perpendicular to the path of the swarm.

Image: Figure 3 from the paper. Caption: The beta-plane of a swarm flyby of Proxima Centauri b, with the swarm shown lying in that plane. (Note that the planned swarm dispersion is much smaller than is indicated in this artist’s impression, and that in practice the swarm will not be exactly centered on Proxima b’s position due to ephemeris errors.)

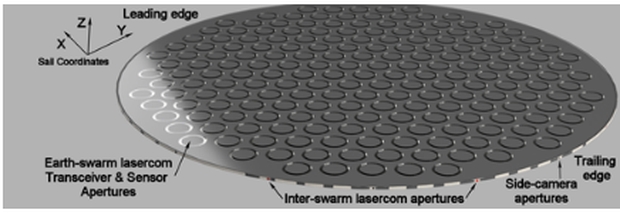

The individual probe is currently envisioned at 4 meters in diameter, and on the order of 10 mm thick (aerographene is a leading material candidate). The total probe mass is 3.6 grams, 2.6 of which is allocated to the laser sail. Instrumentation is placed directly on one side of the sail, a phase-coherent array of metamaterial flat optics. As shown below, it contains spaces for 169 smaller 200-mm annular apertures, although not all of these are necessarily used depending on the profile of the mission being flown. These optical apertures when combined produce the light collecting area for a single coracle approximating a 0.5 m telescope.

Image: This is Figure 4 from the paper. Caption: Oblique view of the top/forward of a probe (side facing away from the launch laser) depicting an array of phase coherent apertures for both imaging and for sending data back to Earth. Credit: Eubanks et al.

An earlier Centauri Dreams article Reaching Proxima b: The Beauty of the Swarm gives background particulars, but the concept is now being brought forward with a great deal more detail. Swarm concepts are useful because the high number of probes heightens the chances that some of the probes may move past both sides of the target for maximum coverage. It’s noteworthy that the authors, taking into account launch as well as voyage and encounter losses, assume only 300 of the original 1000 will be left for communications back to Earth. As we’ll see, some of the probes are to be ‘sacrificed’ as they serve the communications needs of the mission.

Working with the Medium

But let’s get back to the question of the interstellar medium. Each of the probes is to rotate 90 degrees at the end of the boost phase, the idea being to reduce erosion during the cruise phase by traveling edge-on. We have to get through the comparatively dense interplanetary zone before exiting into interstellar space – here it’s interesting that given the direction of Proxima Centauri from the ‘nose’ of the heliosphere, the movement through the heliopause should occur at roughly the same distances experienced by the Voyagers – 125.6 and 119 AU. We’re moving, of course, considerably faster, and at 0.20 c would exit the Solar System in less than four days. Voyager 2 needed 41 years to make this passage. From the paper:

It is not possible to increase speeds with drag from the ISM wind caused by the probe’s velocity; we use ISM drag to implement a velocity on target technique, slowing down the later launched probes so that velocities come to match as probes approach each other. Once the solar system risk zone is passed this technique will be initiated by rotating swarm members into a “face-down” sail-side up configuration, increasing the drag by having the sail-side face into the ISM wind. In the face-down configuration, the main communications lasers on the instrumentation side will be facing the Earth, enabling high-bit-rate communications without exposing the instrumentation and electronics to ISM wind damage. Note that the first probe launched will not have to enter a facedown configuration, and the later launched probes will advance to join it.

So we have differential drag between edge-on probes and face-down probes, the result being a swarm that is gradually assembled over 2.79 years. Remember that the plan is to boost the entire swarm into space in a period of no more than one month. The swarm begins to coalesce after launch because the launch velocity of each new probe is increased, allowing later-launched probes to catch up with earlier ones. The probes all return to an edge-on configuration after swarm assembly, coordinating communications through six lasers per probe.
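The heliopause crossing time mentioned earlier is easy to check on the back of an envelope. The sketch below assumes a constant cruise speed of 0.2 c and uses Voyager 2's 119 AU crossing distance as the path length; the function name is my own, and nothing here comes from the paper itself.

```python
# Rough check: how long does a probe at 0.2 c take to cover the
# distance at which Voyager 2 crossed the heliopause (119 AU)?
AU_KM = 149_597_870.7      # kilometres per astronomical unit
C_KM_S = 299_792.458       # speed of light in km/s
SECONDS_PER_DAY = 86_400.0


def crossing_time_days(distance_au: float, beta: float) -> float:
    """Days needed to cover distance_au at a constant speed of beta * c."""
    speed_km_s = beta * C_KM_S
    return distance_au * AU_KM / speed_km_s / SECONDS_PER_DAY


days = crossing_time_days(119.0, 0.2)
voyager_days = 41 * 365.25  # Voyager 2's 41-year passage, in days

print(f"Crossing time at 0.2 c: {days:.2f} days")
print(f"Speed-up relative to Voyager 2: about {voyager_days / days:,.0f}x")
```

The result lands a little under three and a half days, consistent with the 'less than four days' figure.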

Image: Leaving the heliosphere, we move into the interstellar medium’s gas, plasma, dust, cosmic rays, and magnetic fields. Can we use this ‘interstellar wind’ to shape the Proxima Centauri swarm? Credit: JHU/APL.

I mentioned above the attrition of the swarm along the route, which is not entirely due to encounters with material in the ISM. The authors also turn individual probes into a face-down configuration to manage data communications with Earth, creating higher drag that pulls them out of the swarm. Meanwhile, the 30 percent of the swarm thought to be remaining at the Proxima Centauri system can target Proxima Centauri b or break into sub-swarms, perhaps targeting other planets in the system. One week before the encounter, the first probes will rotate their instrument side into the forward direction of motion, relaying observations to the rest of the swarm. The entire swarm will go face-down after the encounter for relaying data to Earth. The data return phase is assumed to require no less than a year.

Bringing the Data Home

The communications problem vexed Breakthrough Starshot designers, so the solution posed here catches the eye. Among the options are having probes return data independently or, far better, creating a time-coherent swarm which sends communications pulses that arrive at Earth simultaneously. More challenging but perhaps the most worthy of future study is to create a sparse phased array for communication, one that allows swarm antennas to act as a single higher-gain antenna. The thin, ultra-lightweight optical elements are phase-locked to achieve a synthetic aperture of considerable size, but one that demands maintaining probe positions to within a few hundred nanometers. From the paper:

The advantage of this latter approach is that the synthesized beam pattern in the main lobe at Earth is equivalent to that of the single transmission reflector with area equal to the sum of the areas of all the probes, although this would be a sparse array and the beam shape would not be the same as the beam formed by a solid antenna with the same extent. Note that this approach would require maintaining the positions of the probe members at the few 100 nm level or better, roughly 6 orders of magnitude better than the time coherent swarm approach. We do not consider this last sparse phased array approach further in this paper due to the extreme difficulty of phase coordination across the swarm.
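To get a feel for why the position tolerance is so tight, here is a minimal sketch of how a line-of-sight position error translates into optical phase error. It assumes the 539 nm Earth-link wavelength quoted in the paper's Figure 2 caption, and it uses the standard result that independent Gaussian phase errors of RMS σ reduce the coherent main-lobe power of a large array by roughly exp(−σ²). The function names and the sample error values are illustrative assumptions of mine, not figures from the paper.

```python
import math

WAVELENGTH_NM = 539.0  # Earth-link wavelength from the Figure 2 caption


def phase_error_rad(position_error_nm: float,
                    wavelength_nm: float = WAVELENGTH_NM) -> float:
    """Phase error caused by a line-of-sight position error."""
    return 2.0 * math.pi * position_error_nm / wavelength_nm


def coherent_power_fraction(rms_phase_rad: float) -> float:
    """Approximate fraction of ideal main-lobe power retained when every
    element has an independent Gaussian phase error (large-array limit)."""
    return math.exp(-rms_phase_rad ** 2)


# Illustrative RMS position errors in nanometres (assumed values).
for error_nm in (10.0, 50.0, 100.0, 300.0):
    phi = phase_error_rad(error_nm)
    kept = coherent_power_fraction(phi)
    print(f"{error_nm:6.0f} nm -> {math.degrees(phi):6.1f} deg phase error, "
          f"{kept:.3f} of ideal power")
```

Under these assumptions the beam holds up well at tens of nanometers of error and degrades rapidly as the error approaches a sizable fraction of the wavelength, which is the basic reason phase coordination across a swarm is so demanding.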

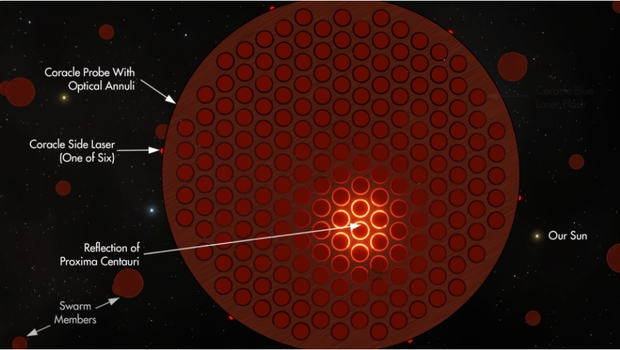

Image: This is Figure 2 from the paper. Caption: Artist’s impression of a Coracle approaching Proxima b (and reflecting the light of Proxima Centauri). The 12,000nm intra-swarm “Side Lasers” (see Subsection 6.3) are for intra-swarm probe-to-probe communications. Each round ring on the top (instrumentation) side of the sail visible here is the 200 mm annulus aperture of a folded optic camera (see Figure 6 and discussion) shared between imaging and communications with Earth at 432/539-nm. Conceptual artwork by Mark Garlick. (Note: Seeing other probes apparently nearby at encounter is artistic license!)

Data broker ‘agents’ can be used to filter and select data from the many terabytes collected during the flyby, managing the data return to Earth. In this way redundant data can be filtered out of the data flood, using what the authors call Observe-Evaluate-Select-Flood (OESF) loops, in which the swarm is essentially divided into nested sets of probes. This part of the concept deserves more attention than I can give it here, but it’s essentially applying an AI approach not only to managing collected data but also to analyzing imagery for further consideration. Even so, this statement pulled me up short:

Although the techniques of developing swarm coherence and agent-based data selection certainly require work, there seems to be no fundamental limitation to the return of gigabytes of data over interstellar distances with large swarms of laser-sail spacecraft.

I believe the statement is true insofar as we can come up with a solution consonant with physics to make this happen, but returning gigabytes of data with this particular mission concept seems too much to hope for. That’s the judgment of a layman, however, and it will be fascinating to see how these communications concepts play out in the literature as this project continues to be refined. The concepts here are ingenious, even startling, and deserve further investigation.

Moving into the Proxima Centauri System

The prospect of instrumentation in the Proxima Centauri system is exciting indeed. Given the number of probes entering this zone, the authors believe at least one is likely to pass within a single diameter of Proxima b, which would provide spectroscopic analysis of the planet’s atmosphere as well as imaging in considerable detail. Mapping of the surface on the day side of the planet would allow us to search for the so-called ‘vegetation red edge’ and any biology there. The search for biosignatures and technosignatures could get down to the level of features like coral reefs or even night-time city lights.

High-velocity flybys pose huge imaging challenges, given the needed length of exposure time and the movement of the planet in the field of view. The result: enormous image smear. To attack the problem, the authors point to Time Delay Integration (TDI), Velocity Shift Integration (VSI) and high dynamic range imaging (HDR), three techniques explained in the paper. The close flyby of Proxima b itself will last less than a minute. Note the ramifications of this not only on data return but the necessary computational resources of the swarm:

In 0.01 s the spacecraft would move ∼600 km, which, at a distance of 10,000 km… would cause noticeable distortions of the images being stacked; these are predictable and can be removed. Iterative HDR can remove rotations of the spacecraft during the image, correct for ephemeris errors during imaging, and also correct smearing due to objects with different relative velocities in the image plane. In a 10 second flyby with 10⁶ mega-pixel images per second per aperture a single probe with multiple aperture arrays might obtain billions of images, mostly greatly underexposed. This will form the raw material for searches for small bodies and unanticipated features in the Proxima system. It will never be possible to send all of this raw material back to Earth; extracting as much useful information as possible from it after the encounter will be a major computational task for the probes in the swarm.
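The numbers in this passage can be reproduced with a few lines of arithmetic. The sketch below assumes a flyby speed of 0.2 c and a frame rate of one million images per second per aperture; the count of 100 apertures per probe is purely a placeholder of mine, since the passage says only 'multiple aperture arrays'.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s


def travel_km(beta: float, exposure_s: float) -> float:
    """Distance covered during one exposure at a speed of beta * c."""
    return beta * C_KM_S * exposure_s


def apparent_shift_deg(travel: float, range_km: float) -> float:
    """Angle subtended by the probe's motion as seen from the target range."""
    return math.degrees(math.atan(travel / range_km))


def image_count(rate_per_s: float, duration_s: float, apertures: int) -> float:
    """Total frames collected by one probe during the flyby."""
    return rate_per_s * duration_s * apertures


moved = travel_km(0.2, 0.01)
shift = apparent_shift_deg(moved, 10_000.0)
frames = image_count(1e6, 10.0, apertures=100)  # 100 apertures is hypothetical

print(f"Motion in 0.01 s: {moved:.0f} km")
print(f"Apparent shift at 10,000 km: {shift:.2f} degrees")
print(f"Frames in a 10 s flyby: {frames:.1e}")
```

A shift of several degrees within a single hundredth of a second shows why stacking has to track the changing geometry, and the frame count makes plain why the raw data can never all come home.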

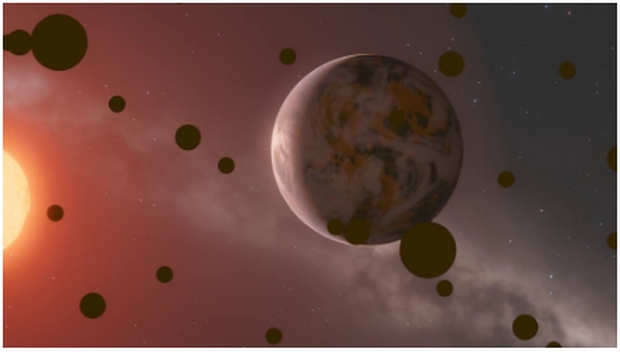

Image: This is Figure 1 from the paper. Caption: Artist’s impression of the approach of a swarm towards Proxima b; at this point, a few seconds before closest approach, the swarm could be examining the planet’s nightside for techno- or bioluminescence. (This image is based on the artistic work of Dr. Mark A. Garlick.)

Orienting the probes after the system flythrough to communicate with Earth, the swarm will be able to observe the Proxima system as it recedes and observe the interactions of the star’s heliosphere with its local interstellar medium (and recall the New Horizons imagery of Pluto after that spacecraft’s encounter). Moreover, a distant encounter with Centauri A and B will occur about a year after the Proxima Centauri event, although the approach as conceived here would be on the order of 10,000 AU. Planets in the habitable zone of both stars should be observable from this distance. Much better, of course, to have a separate Centauri AB flyby mission, but for now one system at a time.

Navigation will be difficult given that we need highly accurate ephemeris information. In other words, we have to know exactly where Proxima Centauri b is. That is an obvious point, but it’s problematic: given current data from Gaia, the possible error in the star’s proper motion amounts to a 260,000 kilometer position error over the mission’s flight time. A better determination of Proxima b’s orbit is also critical, which is why the authors consider a possible precursor mission several years before the first swarm mission to improve the ephemeris.
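It helps to turn that 260,000 kilometer figure back into the angular terms astrometrists work in. The sketch below is a rough inversion under assumptions of my own: a distance to Proxima Centauri of 4.2465 light years, a constant cruise at 0.2 c, and an error that is purely transverse and grows linearly over the flight time. It is not the authors' calculation.

```python
LIGHT_YEAR_KM = 9.4607e12           # kilometres per light year
MAS_PER_RADIAN = 206_264.806 * 1e3  # milliarcseconds per radian

DISTANCE_LY = 4.2465   # assumed distance to Proxima Centauri
CRUISE_BETA = 0.2      # cruise speed as a fraction of c
POSITION_ERROR_KM = 260_000.0


def flight_time_years(distance_ly: float, beta: float) -> float:
    """Years needed to cover distance_ly at a constant speed of beta * c."""
    return distance_ly / beta


def angular_error_mas(error_km: float, distance_ly: float) -> float:
    """Small-angle size of a transverse error as seen from Earth."""
    return error_km / (distance_ly * LIGHT_YEAR_KM) * MAS_PER_RADIAN


years = flight_time_years(DISTANCE_LY, CRUISE_BETA)
total_mas = angular_error_mas(POSITION_ERROR_KM, DISTANCE_LY)
rate_mas_per_year = total_mas / years

print(f"Flight time: {years:.1f} years")
print(f"Accumulated angular error: {total_mas:.2f} mas")
print(f"Implied proper-motion uncertainty: {rate_mas_per_year:.3f} mas/yr")
```

On these assumptions the position error corresponds to a proper-motion uncertainty of well under a tenth of a milliarcsecond per year, a reminder of how small an astrometric error becomes a large miss distance after two decades of flight.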

I won’t list all the authors of this paper but many will be familiar to Centauri Dreams readers, including Jean Schneider and Pierre Kervella (Paris Observatory), Andreas Hein (I4IS/University of Luxembourg), Robert Kennedy (I4IS), Slava Turyshev (JPL) and Philip Lubin (UC-Santa Barbara). The kind of investigation mounted by this team is how we move the ball forward in interstellar studies. Drawing on recent work including the deep investigations of the Breakthrough Starshot scientists, Eubanks and colleagues have enlarged the speculative space, especially in terms of communications and swarm computational options, all of which help make an interstellar crossing possible in decades rather than centuries. This paper should be studied by anyone seriously following our increasingly refined strategies for making such a crossing happen.

The paper is Eubanks et al., “Science from the In Situ Exploration of the Proxima Centauri System,” available as a preprint.

Moving a Civilization: The Caplan Thruster 30 Apr 8:57 AM (last month)

Because I’ve been talking about enormous structures lately and describing them as ‘big dumb objects,’ I thought it would be fun to revisit the origin of that term. BDOs emerged in what was intended as an April Fool’s joke by writer and critic Peter Nicholls, famed as editor (with John Clute) of The Encyclopedia of Science Fiction, now online in its fourth edition. He describes the genesis of the term in a well-known essay called “Big Dumb Objects and Cosmic Enigmas: The Love Affair between Space Fiction and the Transcendental”:

“All these matters were in the forefront of my mind when I came to revise The Encyclopedia of Science Fiction, a task in which my primary responsibility was to rewrite and rethink all those entries dealing with the themes of science fiction. This brings us to April Fool’s Day, 1992, that being a day in which practical jokes are traditionally carried out. On that day I was exhausted writing theme entries, and my brain was hurting. So I decided to write an April Fool’s entry. I would pretend that a phrase that I’d always liked, originated by the critic Roz Kaveney but not in general use, was actually a known critical term. I would write an entry called “Big Dumb Objects” in a poker-faced style, suggesting an even more absurd critical term to be used in its place, “megalotropic sf…”

Image: The cover of the first edition of Greg Bear’s novel Eon (1985), which describes a huge, terraformed asteroid that enters the Solar System. This is one of the Big Dumb Object novels Nicholls discusses in his formative essay.

Nicholls soon realized that vast structures were symptomatic of what makes the best science fiction operate, and he relates them to the “…tension between the writer’s respect for and understanding of orderly scientific thought (the classical) and his love for the phenomena which do not submit to this order (the romantic).” If that seems a stretch, read the essay, where he points out that ‘hard’ science fiction, with its adherence to the laws of physics, can inspire in its ringworlds and Dyson spheres and Ramas a deep Dionysian mystery, a sense of the sublime that we can easily relate to the familiar ‘sense of wonder.’ I like Nicholls’ reference to the rituals of Dionysus, with their ecstasies and trances.

In our talk about stability and BDOs, we home in on the practical matter of whether or not they could actually be built, but again, the laws of physics imply this is an engineering problem that an advanced civilization could well master. It could be, of course, that a Big ‘Dumb’ Object isn’t really so dumb if it needs a constant technological assist to survive, but Colin McInnes, whose essay on stellar engines we examined last week, has also produced a paper covering ringworlds and Dyson spheres that finds modes of stability even there. I’ll give that citation below, and thank Dr. McInnes for his kind note with the reference. So maybe we need another term: ‘Big Smart Objects’?

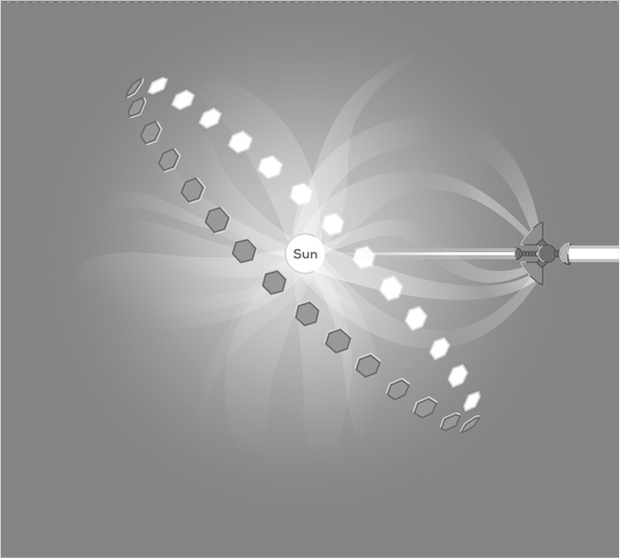

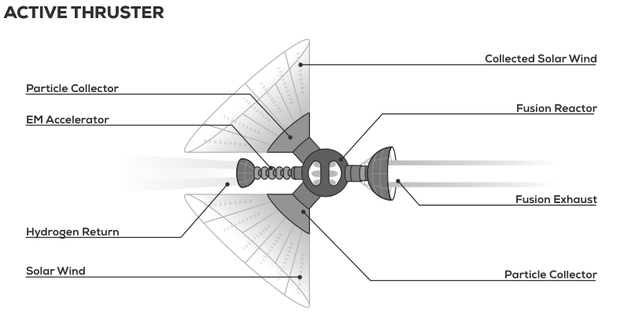

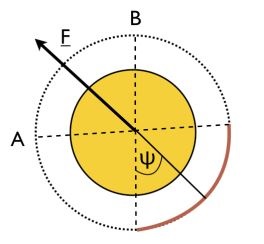

For today, let’s segue to a relatively new entry in the stellar engine portfolio, as developed by Illinois State University’s Matthew Caplan. Unlike a Dyson sphere or swarm, a stellar engine produces a change in its star’s position, small enough that a planetary system is not disrupted, but large enough that over millions of years, the star’s galactic orbit can be modified. Speculating about what alien civilizations might do takes us deep into the weeds of philosophy and epistemology, an exercise best left for future posts. But let’s take one possibility that seems rational, escaping from one or more nearby supernovae. Thus Caplan:

…ozone depletion in the earth’s atmosphere due [to] ultraviolet radiation from a supernova within 10–100 pc may result in a mass extinction event. Amusingly, mounting evidence for one or several nearby supernova (100 pc) approximately 2 million years ago now forms the basis for recent suggestions that nearby supernova caused climatic shifts which directly influenced human evolution. The effect of a supernova on an exoplanetary biosphere inhabited by an advanced civilization will depend on that planet’s atmospheric composition and biosphere, and may be very different from earth, possibly extending the danger zone of supernova by an order of magnitude relative to earth. A catastrophe such as a supernova could likely be predicted millions of years in advance, at minimum, for an advanced civilization with detailed understanding of star formation and the supernova mechanism.