Street art in Palma de Mallorca (March 2025) 30 Mar 11:15 AM

Once upon a time I made a tour through Berlin with a focus on lesser-known but interesting and special places that are more connected to life and subcultures in Berlin. A big part of that was street art, which fascinates me to this day. All the different ways creativity is used on walls, buildings and anything else in urban space also reflect society and the struggles within it.

Belgrade is (or at least was) an amazing spot for a buzzing street art scene, and I blogged about my experience in Budapest. Now I had the opportunity to roam through Palma de Mallorca. Without any preparation, on just a short walk through the old town I spotted so many interesting pieces.

Unfortunately I have context for only one of them. Still, they tell a story, so please enjoy the artworks I found.

But let me start with the one I have context about. Miguel Gila was a Spanish comedian and actor, known among other things for his role in May I Speak with the Enemy?. He was shaped by the Spanish Civil War and by captivity in concentration camps and prisons.

In this piece, as in some others, you notice that the characters are wearing a mask. I do not know whether the masks are part of the original creation or were added later, especially the strokes on top. The symbolism in this piece is saddening, as he was someone who had a lot to say. Context was provided by Daniel – thank you!

And without further ado, my other takes:

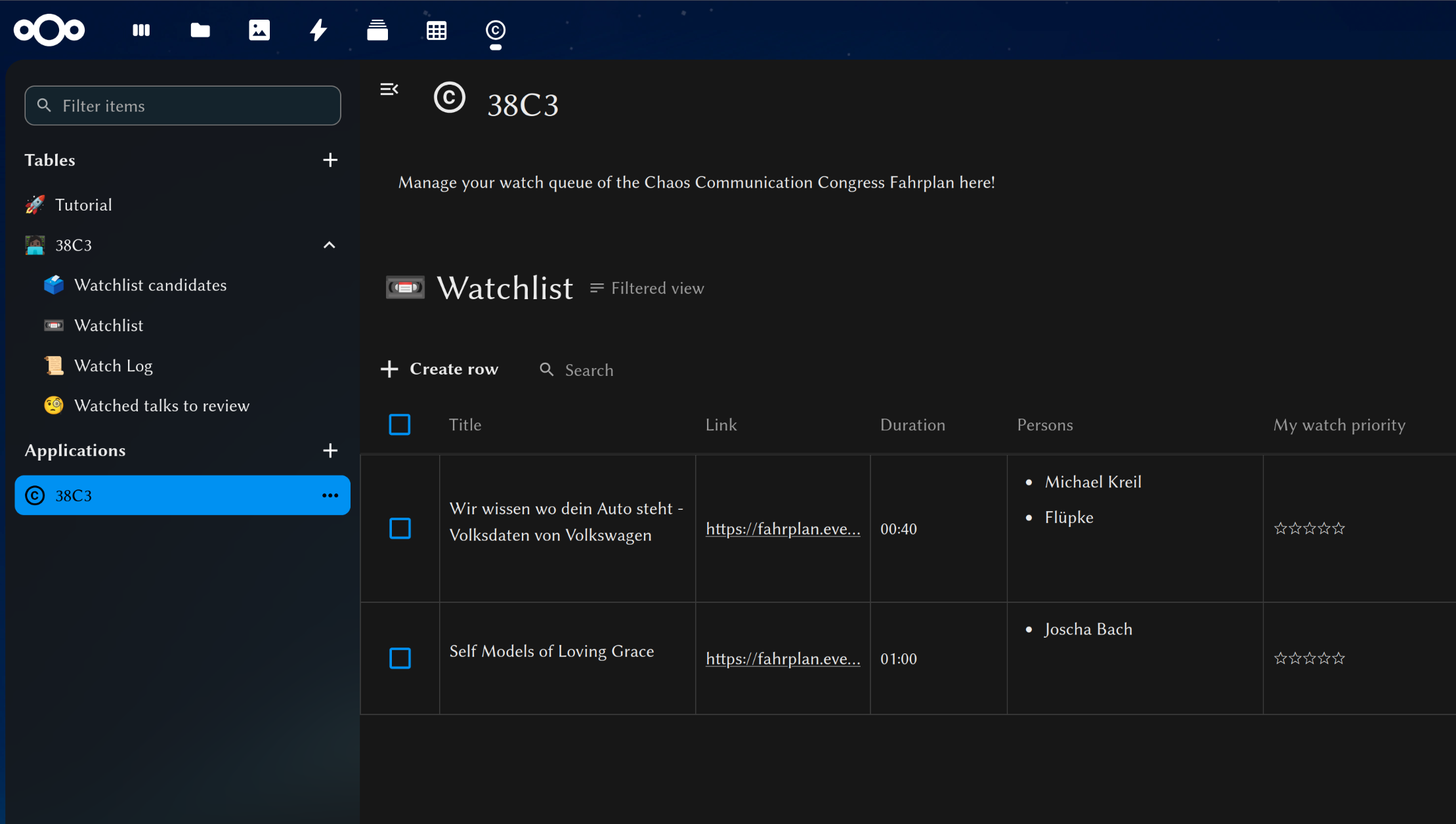

Managing the Congress watch list with Nextcloud Tables 29 Dec 2024 2:08 PM

Whether you are at the Congress or not (like me), there is a wealth of great talks and presentations, more than anyone can keep in mind. They need managing somehow.

This year I am trying something new: uploading the Fahrplan into Nextcloud Tables, with a few process-related views for specific information and a good overview. Importing the schedule and keeping it in sync requires a custom script that makes use of the Tables API.

The same script can also create the surrounding structure along the way: the Table, the Views, and a Tables Application.

Every follow-up run of the script will add, remove or update talks if a new Fahrplan version is available.
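The add/remove/update logic of such a sync boils down to a diff between the talks in the Fahrplan and the rows already in the table, keyed by a talk ID. Below is a minimal bash sketch of just that step, assuming both sides have been exported to files with lines of the form `id<TAB>fingerprint` (for instance a hash over a talk's fields). The function `diff_talks` and this file format are hypothetical and not taken from fahrplan2tables; fetching the Fahrplan and calling the Tables API are left out.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of fahrplan2tables): compare the talks of a
# new Fahrplan with the rows already in the table.
# Both input files hold lines "id<TAB>fingerprint".
# Prints one action per line: "add <id>", "update <id>" or "remove <id>".
diff_talks() {
    local fahrplan_file=$1 table_file=$2
    local -A wanted=() present=()
    local id fp

    while IFS=$'\t' read -r id fp; do
        [[ -n $id ]] && wanted[$id]=$fp
    done < "$fahrplan_file"
    while IFS=$'\t' read -r id fp; do
        [[ -n $id ]] && present[$id]=$fp
    done < "$table_file"

    # Talks in the Fahrplan: unknown ones are added, changed ones updated
    for id in "${!wanted[@]}"; do
        if [[ -z ${present[$id]+set} ]]; then
            echo "add $id"
        elif [[ ${present[$id]} != "${wanted[$id]}" ]]; then
            echo "update $id"
        fi
    done
    # Rows whose talk disappeared from the Fahrplan are removed
    for id in "${!present[@]}"; do
        [[ -z ${wanted[$id]+set} ]] && echo "remove $id"
    done
    return 0
}
```

Each printed action would then be translated into the corresponding Tables API request. Comparing a fingerprint instead of every field keeps the update check cheap; note that the output order is not defined, since bash associative arrays are unordered.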

Now I have a process in which I can evaluate talks, keep an overview in a watch list, post-process them (adding a rating or annotation now or later) and keep a log of watched talks as well.

The script fahrplan2tables is available on Codeberg. If you would like to use it, check the requirements in the Readme (currently Tables needs to be patched to avoid a bug that is fixed but not yet released).

What's left is watching the recordings now ;)

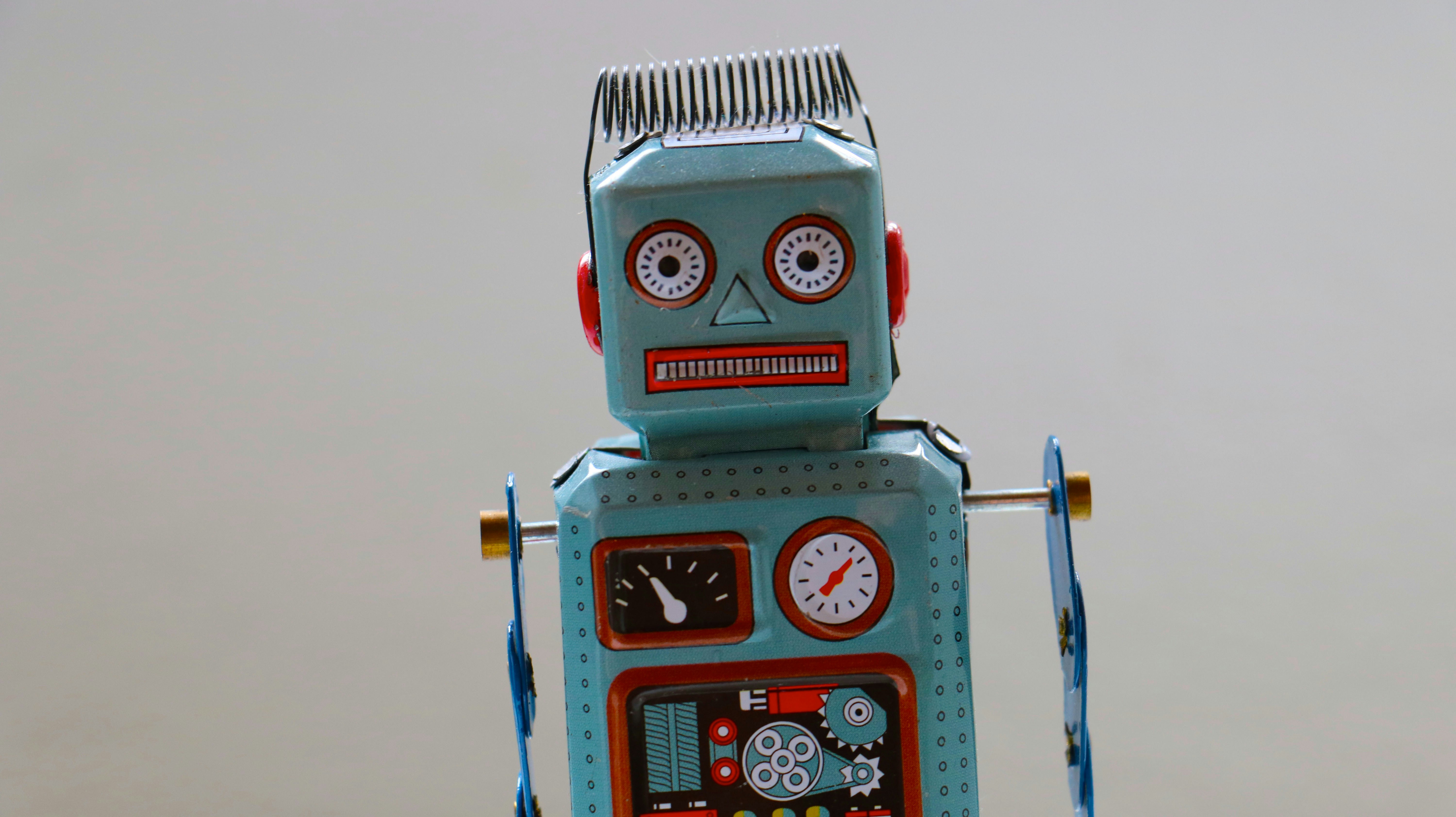

Connect to a custom local domain from Android emulator 29 Sep 2024 10:03 AM

In mid-September I was at the Nextcloud conference and took the opportunity to join a workshop on Android development (I still utterly dislike Android, but that is a different topic). I had tried setting up Android Studio before and ran into trouble, so why not try to get it running while the pros are around?

It turned out it simply worked after installation. No idea what I did wrong last time, maybe I pushed the wrong button. However, that only held until I tried to connect the checked-out and running Nextcloud app to my local dev or test setup. I have the habit of running a few very custom domains that are only made known in the /etc/hosts file, cloud.example.com with TLS among others.

The Android emulator runs in some sort of virtual machine (or even a container) and can connect to the host, but of course it is not aware of any of the domains defined there. My second idea was to use dnsmasq as a DNS server and pass it to the emulator via the -dns-server flag. It did not work, and I am not sure why. So I followed my first idea instead and eventually made it work: modifying the hosts file within the Android emulator.

And actually it is described in detail in Method 3 of this blog post by Jeroen Mols. Various other Internet sources (*cough* SO *cough*) have a shorter subset of these instructions that no longer works. When you take Jeroen's advice, you will eventually make it:

I took these few instructions and put them into a small, quick bash script. It takes one argument (I did not include a check, though): the AVD, i.e. the Android Virtual Device, that shall be booted. Not all of them work (the default one did not), as it is necessary to become root and tamper with Android's filesystem. Pixel_6_Pro_API_VanillaIceCream works well for me.

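Otherwise, the script assumes the Android Studio executables are located as in an Arch Linux install. Further, it copies /usr/local/etc/hosts into the emulator, so place your file there first. It should look something like the following, with 10.0.2.2 being the emulator's alias for the host's loopback interface: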

```
127.0.0.1 localhost
::1       ip6-localhost
10.0.2.2  cloud.example.com all.your.domains
```
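For illustration, a launcher along these lines might look like the following bash sketch. It assumes the SDK lives under `$ANDROID_SDK_ROOT` (falling back to `~/Android/Sdk`, the Android Studio default), that `/usr/local/etc/hosts` exists, and the common writable-system approach: start the emulator with `-writable-system`, restart adbd as root, remount `/system` and push the hosts file. It is not the script published on Codeberg, the function name `launch_avd` is hypothetical, and newer system images may need additional steps (such as disabling verification and rebooting) before the remount succeeds. Unlike the original, it checks its argument.

```bash
#!/usr/bin/env bash
# Hypothetical launcher sketch, NOT the published script.
# ASSUMPTIONS: SDK location, hosts file path and the adb sequence below;
# newer images may additionally need verification disabled plus a reboot.
SDK="${ANDROID_SDK_ROOT:-$HOME/Android/Sdk}"
HOSTS_FILE="/usr/local/etc/hosts"

launch_avd() {
    local avd=${1:-}
    if [[ -z $avd ]]; then
        echo "usage: launch_avd <avd-name>" >&2
        return 1
    fi
    if [[ ! -r $HOSTS_FILE ]]; then
        echo "missing $HOSTS_FILE, place your hosts file there first" >&2
        return 2
    fi

    local adb="$SDK/platform-tools/adb"
    # -writable-system allows remounting /system read-write later on
    "$SDK/emulator/emulator" -avd "$avd" -writable-system &

    "$adb" wait-for-device
    "$adb" root            # restart adbd with root privileges
    "$adb" wait-for-device
    "$adb" remount         # remount /system read-write
    "$adb" push "$HOSTS_FILE" /system/etc/hosts
}
```

Usage, after sourcing the file: `launch_avd Pixel_6_Pro_API_VanillaIceCream`. System images that ship Google Play typically refuse `adb root`, which would be one explanation for why not every AVD works.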

Find my Android emulator launch script on Codeberg.

Thanks go out to Alper and Tobi for the help on site!

How I yearned for school holidays 31 Jul 2024 1:37 PM

I was looking forward so much to the start of my kids' school holidays. I am on vacation for only two of their six weeks, yet the remaining four give me a huge personal benefit as well. I still remember the days of their Easter holidays well!

Of course the holidays bring their own challenges. The kids have to be taken care of, and they express their boredom in various creative ways. My key point, however, is that I get the chance to sleep enough.

Chronotypes and sleep demands

Everyone has their own circadian rhythm, and people are typically divided into morning persons (aka early birds), late risers (aka night owls) and, well, those in between. In my experience, the resulting differences in preferred times are often acknowledged when finding meeting times, but they are also often a source of conflict or stress.

It is said that a person needs between seven and nine hours of sleep. In Germany in 2022, a person living as part of a couple with children slept on average eight hours and fifteen minutes per day, the Federal Statistical Office announced recently. This is a good number, yet I was surprised to read it. I do not get anywhere close to it. And I identify as a night owl.

On school days the alarm clock rings at 6.20am, which is still night for me. If I want to get eight hours of sleep, I have to fall asleep by 10.20pm. I know that many people actually go to sleep by 10pm. This is not natural to me. On a work day I try to be ready for bed by 10.30pm. Unless I am totally exhausted from the previous days, I do not make it. I am not tired enough, and often there are still things to do at home.

Just get used to it? The oldest child has completed six full years in school by now. I tried, and failed, and tried again, and failed again, and the iteration continues. By now, the only real prospect is that the kids become independent enough to manage their mornings themselves.

UK study on sleep affecting cognition

Chronotypes and their effects on everyday life are the subject of studies. One of the latest was carried out in the United Kingdom, focusing on how different sleep patterns affect cognitive results (and, as usual, more research is necessary, of course).

For one, they state that »studies have shown that disruption in circadian rhythms, such as those from shift work or jet lag, negatively impacted cognitive performance«.

For another, "normal" sleepers achieved 6 to 10% higher cognitive results than morning types, and night owls even more, 7.5 to 13.5%. Interestingly, »Sleeplessness/insomnia, however, showed no significant association in either cohort«. Well, when I am weary and tired, my error rate is definitely higher.

»Our findings highlight the complex interplay between sleep duration, chronotypes and various health and lifestyle factors on cognitive performance.«

Early bird regime

We have known for a good while that an early school start in the "day" has bad effects on students (for example here). It benefits neither their learning, nor their development, nor their health. I did not find it particularly helpful when I was a teenager and my school started at 7.35am. I had my stereo at maximum volume, every remote out of reach, and The Offspring's Mota (first track on Ixnay on the Hombre) blasting out, so I actually had to get out of bed.

Also, after the last summer holidays our teachers told us that the return to school was difficult: the kids had drifted back to their individual sleeping times, switching back to the early bird regime was not easy, and the students were barely awake in class.

At the same time, I see teachers staying up quite long as well, answering emails late in the day, at times when they should ideally be in bed already. Some of them have kids, too. I am not surprised by the recent high rate of teacher burnout. I am a bit surprised that politicians are considering increasing working hours, which would further fuel (pun not intended) the problem.

At our youngest's primary school, the schedule had to be restructured, and preferences were asked for among four concrete options. Two would have kept the same starting time, while the other two proposals would have meant an earlier start. Fortunately there was a clear vote against starting even earlier.

Now, I do not want to sound harsh, and the issue is not easily solved. Of course there are jobs that have to be covered 24/7, and others with fixed hours that require being present on site. It also means that I would rule out professions where an early start is mandatory (so no teaching for me; there was a time when career changers were sought to combat the teacher shortage).

Yet I hope we get to a point where we can make the world a bit friendlier and healthier for night owls. I imagine early birds can still rise early and get busy with whatever is on their plate, enjoying calmer working hours with fewer disruptions. We already have before-school care on site; its hours might need to be extended, but perhaps after-school care could be shortened in return.

Prominent Night Owls

Then there is (or was) a trend of highlighting the weird habits of prominent people, and morning persons were featured there as well. And as there are already so many "list of 22 night owls" articles out there, I do not want to chime in.

So before you go and start your search, I just want to point out one person: H. P. Lovecraft, prophet of Cthulhu, icon of horror fiction. (Don't worry, horror fans are just as nice and kind.)

And let me conclude with a line from the punk band Die Ärzte's song Tu das nicht:

Unsre Kunst die erblüht und gedeiht in der Nacht (Our art, it blossoms and thrives in the night)

My Nextcloud Dev Setup 30 Jun 2024 4:45 AM

(Sorry, this one is a bit of a longer post. It perhaps goes best with a cup of coffee or a bottle of Club Mate ;) )

Nextcloud can be run on different software stacks: the PHP runtime, web servers, databases, memcache servers (or none), file storage and so on.

And so there are different ways to set up a Nextcloud development environment. The developer documentation provides a basis for this. A lot of devs use Julius's docker setup so they only need to mount their code.

I am going for a mostly local setup running on good old evergreen Arch Linux. This setup allows for:

- multi-tenancy – having Nextcloud instances in parallel

- multi-runtime – having various PHP versions available at the same time

- TLS enabled – easy and pragmatic certificates

- containerless – but you can add dockered components if you like or need

Mind, this is a development setup and in no way supposed to be used in production or exposed to the Internet. All of the installed services are configured to listen on localhost only!

Branch based code checkouts

The development process in the Nextcloud server git repository is built around the default branch and the stable branches for each Nextcloud series. The branches for the current series are stable29 and stable28, while work on the upcoming version happens on master. The next one, stable30, is going to be branched off with the first release candidate.

Typically a change is merged into the master branch first and then backported, adjusted if required, to the targeted stable branches. Sometimes fixes are only relevant to older branches, when the code has diverged; then they go against the affected stable branch directly. Having the stable branches at hand is also useful when debugging a specific version.

Knowing this, it obviously makes sense to have not only the master branch checked out, but also the relevant stable branches. I run an instance for each of them.

Instead of cloning each branch, I take advantage of git's worktree feature. The .git directory of the default branch checkout remains central; in all other checkouts, .git is just a file pointing to it.

```
$ git worktree list
…
/srv/http/nextcloud/stable27  08cd95790a4 [stable27]
/srv/http/nextcloud/stable28  fd066f90a59 [stable28]
/srv/http/nextcloud/stable29  ed744047bde [stable29]
```
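Such a layout is created with `git worktree add`. The following bash sketch runs the steps in a throwaway repository below a given base directory, so it is safe to try; in the real setup, `master` would be the Nextcloud server clone and the stable branch would come from upstream. The function name `demo_worktrees` is purely illustrative.

```bash
#!/usr/bin/env bash
# Illustrative only: one central checkout ("master") plus a linked
# worktree for a stable branch, built in a scratch repository.
demo_worktrees() {
    local base=$1
    git init -q -b master "$base/master" || return 1
    (
        cd "$base/master" || exit 1
        git -c user.name=dev -c user.email=dev@example.invalid \
            commit -q --allow-empty -m "initial commit"
        git branch stable29
        # The new worktree gets a .git *file* pointing back to master/.git
        git worktree add -q ../stable29 stable29
        git worktree list
    )
}
```

Against the real server clone, the equivalent would be `git -C /srv/http/nextcloud/master worktree add ../stable29 stable29`; if only `origin/stable29` exists, git creates a matching local tracking branch automatically.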

Apps

Apart from the server, there is also a range of apps that I work on. Some of them are bundled with the server repository, but most have their own repository. I keep them in separate app directories to maintain some order:

- apps: only the apps from the server repository

- apps-web: for apps installed from the app store

- apps-repos: for direct checkouts

- apps-isolated: also cloned apps, but bind-mounted from elsewhere

The apps-isolated folder is an edge case addressing trouble I had with one app and its npm build. npm also looks for dependencies in parent folders, and this caused conflicts with the path. Having the app outside of the Nextcloud server tree solves the problem. Actually, I could do this in general with apps-repos instead and have just one folder.

This requires a configuration in each instance’s config.php:

```php
'apps_paths' =>
array (
  0 =>
  array (
    'path' => '/srv/http/nextcloud/master/apps',
    'url' => '/apps',
    'writable' => false,
  ),
  1 =>
  array (
    'path' => '/srv/http/nextcloud/master/apps-repos',
    'url' => '/apps-repos',
    'writable' => false,
  ),
  2 =>
  array (
    'path' => '/srv/http/nextcloud/master/apps-web',
    'url' => '/apps-web',
    'writable' => true,
  ),
  3 =>
  array (
    'path' => '/srv/http/nextcloud/master/apps-isolated',
    'url' => '/apps-isolated',
    'writable' => false,
  ),
),
```

Only the apps-web folder is marked as writable, so apps installed from the app store will only end up there.

I use git worktrees for the apps as well. Those that I need on older Nextcloud versions also get a worktree in the corresponding instance's folder, for example:

```
$ git worktree list
/srv/http/nextcloud/master/apps-repos/flow_notifications    9c34617 [master]
…
/srv/http/nextcloud/stable27/apps-repos/flow_notifications  be83bda [stable27]
/srv/http/nextcloud/stable28/apps-repos/flow_notifications  eefe903 [stable28]
/srv/http/nextcloud/stable29/apps-repos/flow_notifications  9979422 [stable29]
```

This example shows an app that, like the server, has a stable branch for each Nextcloud major version. But there are also others whose branches are compatible with a range of Nextcloud server versions. For those, I add a worktree in the instance of the highest supported version and fill the gaps with bind mounts:

```bash
sudo mount --bind /srv/http/nextcloud/master/apps-repos/user_saml /srv/http/nextcloud/stable29/apps-repos/user_saml
```

Bind mounts are easier to work with in PhpStorm, as they appear as, and are treated like, normal folders. Symlinked files, however, would be opened at their original location, and PhpStorm would consider them not to belong to the open project.

PhpStorm is my IDE of choice, and there, too, I have a project for each stableXY checkout and for master.

PHP runtime

On Arch I can always run the latest and greatest PHP version. That mostly works with master, but Nextcloud 27, for instance, is already not compatible with PHP 8.3.

Fortunately, el_aur provides older PHP versions via the AUR. The packages fetch the sources of PHP and its modules and compile them on the machine, so updates may take a while, but they can run in parallel because they do not conflict with the current PHP version.

To access Nextcloud via the browser later on, the php*-fpm packages need to be installed as well.

Effectively, it is "just" building the old PHP versions on Arch.

Web server and TLS

Now a web server comes in handy, to reach all the Nextcloud versions in the browser with all the supported PHP versions.

I settled for Apache2, with a virtual host defined for each PHP version. For historical reasons, the domains follow the form nc[PHPVERSION].foobar (where foobar was my computer's name back then), which yields nc.foobar, nc82.foobar, nc81.foobar and nc74.foobar.

Each virtual host is configured like this:

```apache
<VirtualHost *:443>
    ServerAdmin webmaster@localhost
    ServerName nc82.foobar
    DocumentRoot /srv/http/nextcloud/
    Include conf/extra/php82-fpm.conf

    RequestHeader edit "If-None-Match" "^\"(.*)-gzip\"$" "\"$1\""
    RequestHeader edit "If-Match" "^\"(.*)-gzip\"$" "\"$1\""
    Header edit "ETag" "^\"(.*[^g][^z][^i][^p])\"$" "\"$1-gzip\""

    <Directory /srv/http/nextcloud/>
        Options Indexes FollowSymLinks MultiViews
        AllowOverride All
        Order allow,deny
        allow from all
        Require all granted
    </Directory>

    LogLevel warn
    ErrorLog /var/log/httpd/error.log
    CustomLog "|/usr/bin/rotatelogs -n3 -L /var/log/httpd/access.log /var/log/httpd/access.log.part 86400" time_combined

    KeepAlive On
    KeepAliveTimeout 100ms
    MaxKeepAliveRequests 2

    SSLEngine on
    SSLCertificateFile /path/to/.local/share/mkcert/nc82.foobar.pem
    SSLCertificateKeyFile /path/to/.local/share/mkcert/nc82.foobar-key.pem
</VirtualHost>
```

The variable parts are the ServerName, the included php-fpm configuration, and the path to the TLS certificate.
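Because only those parts vary, the virtual hosts can be generated from the PHP version. A minimal bash sketch, assuming the nc[PHPVERSION].foobar naming scheme and the mkcert file names shown above; `render_vhost` and `CERT_DIR` are hypothetical names, and the output is trimmed to the essentials (no header rewriting, logging or keep-alive settings).

```bash
#!/usr/bin/env bash
# Hypothetical generator for the per-PHP-version virtual hosts.
# ASSUMPTION: certificates are named <host>.pem / <host>-key.pem as mkcert does.
CERT_DIR="${CERT_DIR:-$HOME/.local/share/mkcert}"

render_vhost() {
    local php=$1                      # e.g. 82 for PHP 8.2
    local name="nc${php}.foobar"      # the three variable parts derive from this
    cat <<EOF
<VirtualHost *:443>
    ServerName ${name}
    DocumentRoot /srv/http/nextcloud/
    Include conf/extra/php${php}-fpm.conf
    <Directory /srv/http/nextcloud/>
        AllowOverride All
        Require all granted
    </Directory>
    SSLEngine on
    SSLCertificateFile ${CERT_DIR}/${name}.pem
    SSLCertificateKeyFile ${CERT_DIR}/${name}-key.pem
</VirtualHost>
EOF
}
```

For example, `render_vhost 81` prints the host for nc81.foobar, which could be redirected into a file included from httpd.conf. The unversioned nc.foobar host does not fit this scheme and would still need its own block.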

The document root points to the general parent folder, so I access all instances through a subdirectory, e.g. https://nc82.foobar/stable29 or https://nc.foobar/master

To deal with name resolution, I simply add the domain names to the /etc/hosts.

```
$ cat /etc/hosts
# Static table lookup for hostnames.
# See hosts(5) for details.
127.0.0.1 localhost
::1       localhost
127.0.1.1 foobar foobar.localdomain nc.foobar nc74.foobar nc81.foobar nc82.foobar cloud.example.com
```

And there is cloud.example.com! That is a plain instance, installed straight from the release archive, for documentation purposes (taking screenshots with the vanilla theme) and such.

For TLS certificates I take advantage of mkcert, a tool by Filippo Valsorda that makes it damn easy to create locally trusted certificates. It creates a local Certificate Authority and imports it system-wide. You can create certificates for any of your domains, wire them into the web server, reload it, done. Your browser will be happy.

Finally, I keep the web server configured to listen only on local addresses, so that it is not reachable externally (firewall or not).

Database

MySQL is widespread, but Nextcloud also supports PostgreSQL, which also seems to have nicer defaults and to be less of a hassle to administer. Besides, many devs already work with MySQL. So I settled for PostgreSQL.

By connecting through a unix socket, I do not even need stupid default passwords. I just create a database and user up front, before installing the Nextcloud instance, and done.

```bash
sudo -iu postgres createuser stable29
sudo -iu postgres createdb --owner=stable29 stable29
```

The database shell is just a sudo -iu postgres psql away, and there is a quick cheat sheet, "PostgreSQL for MySQL users", that provides the basics and is all you need for the first plunge into the cold water. The rest comes naturally along the way.

Memcache

I run Redis locally (and should transition to Redict) and access it via a unix socket. To do so, the web user, here http, has to be a member of the redis group so that the web server, and thus Nextcloud, can open a connection.

The entries in the config.php are then simply:

```php
'memcache.distributed' => '\\OC\\Memcache\\Redis',
'memcache.locking' => '\\OC\\Memcache\\Redis',
'redis' =>
array (
  'host' => '/var/run/redis/redis.sock',
  'port' => 0,
  'timeout' => 0.0,
),
```

To run Redis on a unix socket only, the port has to be set to 0 in /etc/redis/redis.conf (which disables TCP), with the unixsocket setting pointing to the socket path. The socket path and permissions should be double-checked to avoid common first-connection problems.

Redict should not differ materially apart from the name, which mostly shows up in the socket path and the system group.

Some time ago, I also had to debug a case where memcached was used. For this one-off case I ran memcached in a docker container instead and configured Nextcloud to use it. The container can be thrown away and quickly brought back if ever necessary, but that is not my daily bread.

Permissions

Remember that Nextcloud was cloned into a directory that actually belongs to http and not to the actual user?

This setup requires that both http and $USER can read all files and write to some of them: http must be able to write into data, config and apps-web, while $USER should be able to write anywhere.

Instead of adding $USER to the http group (does not feel right), I make use of Linux ACLs.

/srv/http/nextcloud is owned by $USER, but is generally readable.

For /srv/http/nextcloud/{master,stableXY} it is the same, but default ACLs are also set so that $USER always has full access, e.g. when Nextcloud creates files in the data directory. The primary goal is to read or reset nextcloud.log without hassle.

The annoying thing is that Nextcloud is very sensitive about config/config.php and resets its permissions, so using sudo to modify the config is necessary, but survivable.
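With `setfacl`, this could be done roughly as in the following sketch; the function name `grant_user_access` is hypothetical and the exact flags used in this setup are an assumption. `-m` adds an access ACL entry to existing files, `-d` sets a default ACL on directories that newly created entries inherit, and the capital `X` grants execute permission only on directories (and on files that are already executable for someone).

```bash
#!/usr/bin/env bash
# Hypothetical helper: give a user full access to an instance directory,
# including files the web server creates later on.
grant_user_access() {
    local user=$1 dir=$2
    # Access ACL on everything that exists already
    setfacl -R -m "u:${user}:rwX" "$dir" || return 1
    # Default ACL on every directory, inherited by newly created entries
    find "$dir" -type d -exec setfacl -d -m "u:${user}:rwX" {} + || return 1
}
```

It needs enough privileges to change the ACLs of files owned by http, e.g. running `grant_user_access alice /srv/http/nextcloud/stable29` from a root shell, with alice standing in for your user name.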

occ

When using occ, I got bored of typing sudo -u http, so I had a shortcut for it. But then I also got tired of typing suww php occ and figuring out the right PHP version depending on which branch I was on. So I created a script that finds it out for me, and now I can simply run occ (which is what the script is called) and am good wherever I am:

```bash
#!/usr/bin/env bash

# Require: run from a Nextcloud root dir
if [ ! -f lib/versioncheck.php ]; then
    echo "Enter the Nextcloud root dir first" >&2
    exit 255
fi

# Test general php bin first
if php lib/versioncheck.php 1>/dev/null ; then
    # "$@" passes all arguments through with their quoting intact
    sudo -u http php occ "$@"
    exit $?
fi

# Walk through installed binaries, from highest version downwards
# ⚠ requires bfs, which is a drop-in replacement for find, see https://tavianator.com/projects/bfs.html
BINARIES=$(bfs /usr/bin/ -type f -regextype posix-extended -regex '.*/php[0-9]{2}$' | sort -r)

for PHPBIN in ${BINARIES}; do
    if "${PHPBIN}" lib/versioncheck.php 1>/dev/null ; then
        sudo -u http "${PHPBIN}" occ "$@"
        exit $?
    fi
done

echo "No suitable PHP binary found" >&2
exit 254
```

It simply tries out the available php binaries against the lib/versioncheck.php and takes the first one that exits with a success code.

Other components

Just briefly:

I run OpenLDAP locally, and it is typically configured in my instances. It holds a lot of mass-created users and allows me to quickly create a lot of odd configurations, because creative setups are out there in the wild. Keeping them in different subtrees of the directory helps to keep them apart.

Further, I have a VM (using libvirtd/qemu) with a Samba4 server that not only offers SMB shares (I don't want that locally), but also acts as an Active Directory compatible LDAP server. I don't need it often, though.

Then, there is a Keycloak docker container in use to have a SAML provider on the machine (but I also have accounts at some SaaS providers, though I cannot really imagine why you would ever want to outsource authentication and authorization).

If necessary I spin up a Collabora CODE server, which then also runs locally, but only on demand.

Ready for takeoff

When I am ready to (test my) code, I execute devup and it does something like this:

```bash
sudo mount --bind /srv/http/apps/dev /srv/http/nextcloud/master/apps-isolated
sudo mount --bind /srv/http/apps/26 /srv/http/nextcloud/stable26/apps-isolated
sudo mount --bind /srv/http/nextcloud/stable27/apps-repos/user_saml /srv/http/nextcloud/stable25/apps-repos/user_saml
sudo mount --bind /srv/http/nextcloud/master/apps-repos/user_saml /srv/http/nextcloud/stable29/apps-repos/user_saml
sudo systemctl start redis
sudo systemctl start docker || true
sudo systemctl start slapd
sudo systemctl start postgresql
sudo systemctl start php-fpm
sudo systemctl start php82-fpm.service
sudo systemctl start php74-fpm.service
sudo systemctl start httpd
sudo systemctl start libvirtd
```

Essentially I set up the bind-mounts first, and later on start related services. There is a devdown script as well, that stops the services and unmounts the mounts.

Advantages and pitfalls

This setup allows for development across various branches and their different requirements without fiddling with other services or components, apart from some initial setup (e.g. a new server worktree) or occasional routine tasks (e.g. a new PHP version). Instances are set up once and are otherwise persistent.

Being persistent, it allows for some undirected but more realistic testing: different configurations, combinations of apps and in-situ data let you uncover effects that might not show up in a very clean, very minimal installation. In-series upgrades may make you aware of problems that are not detected by automated tests.

Varying developer setups across the developer base inherently test the various possible ways of composing the stack. For instance, a colleague and I each stumbled across diverging database behaviour, in MySQL and Postgres respectively, and could address it before a release.

Lastly, setting up the different components of the stack makes one more involved with them. When things are not ready to run off the shelf, one gets to understand better how this stuff works, and it is helpful to have a good idea of the foundations of the stack.

Every medal has two sides, of course. This situation is sometimes also a source of PEBCAK moments. Federated sharing of address books did not work, not because of some odd race condition when setting up the trust relationship between the servers, but because I had disabled receiving shares while testing some case a while ago.

Or, structural changes (for example to the database schema) are made and later reverted. While nothing would happen on a regular update, the intermediate change may already have been applied to your instance and has to be reverted manually, and you need to be aware of it. It happens maybe once every three years, and only if you are unlucky enough to run into this situation, but it can happen.

Occasionally (I do not need it that often), I set up an ad-hoc instance on a memdisk (/dev/shm/). The use case is either to closely replicate a configuration for debugging, or to investigate an issue during an upgrade. Especially then it is easy to roll back (initialize a git repo and use SQLite if possible; other databases have to be dumped).

This is the setup that has crystallized and improved over time since I switched to Arch Linux (via Antergos back then – was it 2016?). I am comfortable with it, and I would call it battle-tested. Perhaps it is not the best choice for someone only interested in developing a couple of apps, but it has its benefits with a server focus or a good deal of server experience.
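The git-based roll-back could look like this sketch, assuming the ad-hoc instance lives in a single directory (e.g. under /dev/shm) and uses SQLite, so the database file is part of the snapshot. Both function names are hypothetical. Note that `git clean -fdx` also deletes untracked and ignored files, which is exactly what is wanted here but would be destructive anywhere else, and that git does not track empty directories.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: snapshot an ad-hoc instance before an upgrade attempt ...
snapshot_instance() {
    local dir=$1
    git -C "$dir" init -q || return 1
    git -C "$dir" add -A || return 1
    git -C "$dir" -c user.name=dev -c user.email=dev@example.invalid \
        commit -q -m "snapshot before upgrade"
}

# ... and return to exactly that state afterwards.
rollback_instance() {
    local dir=$1
    git -C "$dir" reset -q --hard || return 1   # restore tracked files
    git -C "$dir" clean -q -fdx                 # drop everything created since
}
```

A typical round trip: `snapshot_instance /dev/shm/nc-upgrade`, run the upgrade, inspect, then `rollback_instance /dev/shm/nc-upgrade` and try again (the path is just an example).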

Impressions of Budapest, 2024 29 Apr 2024 1:03 PM

This year in March I went with my beloved ones on a week-long trip to Budapest, Hungary. It was the first time for all of us to visit this city. Here are my impressions and things I learned, along with a few tips.

We stayed at the Seven Seasons apartment hotel, pretty much in the city center. In the spacious two-room apartment we had a room for us, the kids stayed in the living room, and there was also an equipped kitchen, well suited for cooking kid-friendly food. The hotel also offered a good breakfast buffet; we were satisfied.

The city

Hungary's capital consists of two parts on either side of the Danube river. The hilly part is Buda, with its castle and presidential palace, while the flat part is Pest, with the parliament building and the Jewish quarter. Both parts are connected by bridges, the Széchenyi Chain Bridge in particular being monumental, beautiful and only recently renovated. The location was already popular in ancient times, so there are also some Roman remnants to see, such as two amphitheatres on the Buda side (unfortunately, visiting them did not fit our plans).

Our radius in this one week was pretty much downtown. We reached all destinations from our hotel on foot, except for the zoo, for which we took the subway. One downer was that not all indoor aqua parks allowed kids, which resulted in complaints from the kids – hereby they are forwarded ;)

There are some parks and green spaces all around the city; nevertheless there is a lot of car traffic in all the streets, and some drivers simply take the right of way (though my perception is that car drivers are becoming more ruthless in general). What they also have in abundance are tourists. Downtown is crowded with tourists wherever you look, and that part clearly lives off them. Happens.

Me and urban places

When I look at a city, I am interested in how people lived and live, how the different parts of society behave, especially the areas and people that are not the elite and not the big monuments. In short, the combination of the city's history and its contemporary situation.

Now, while Budapest is apparently often compared to Paris, I am reminded of Belgrade. I do see a lot of differences as well, but the comparison sticks. Also, I cannot say much about a comparison to Paris, as I have not been there yet.

Like Belgrade, Budapest is located on the Danube, and throughout history it was an important location, marked by ongoing struggles and changing rulers. The symbol of this is the citadel on the Buda side, which the rulers used to keep the population in check.

I like to wander around the city and look at the houses, the people, the graffiti. Especially graffiti: whether it is artful, or whether it carries slogans. In Belgrade (if you allow me the detour) you could see graffiti being put up and contested night after night. Society had something to negotiate there. They have astonishing murals, and you could feel the vibe (if you are interested, I recommend the Street Art Belgrade book). In central Budapest, however, this was missing. I do not recall seeing any meaningful graffiti, if any at all. There is a good number of murals, many of them commercial, and only very few of them were impressive. We used this article on Budapest Flow as a starting point. Quite likely we were just in the wrong places for good graffiti.

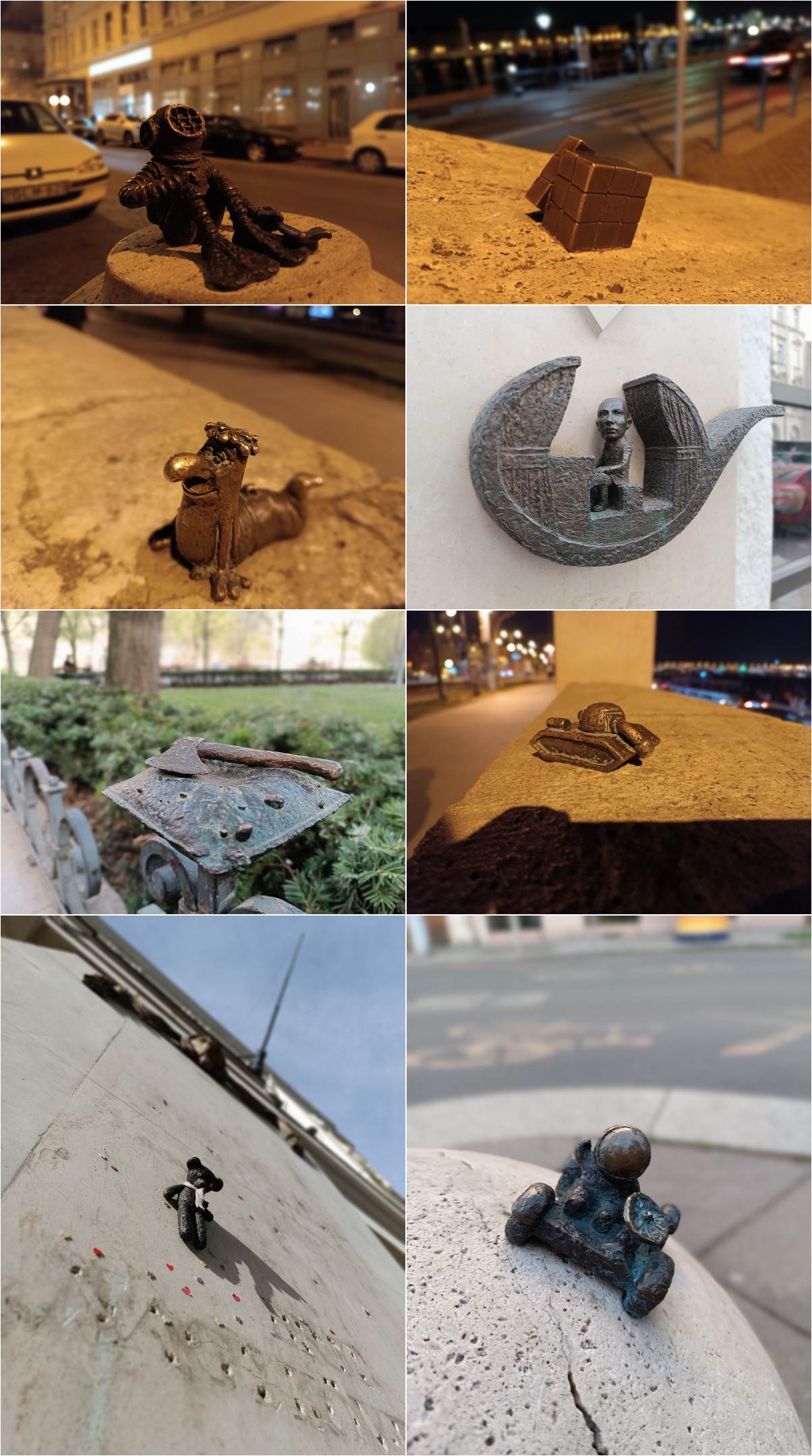

However, another type of street art enchanted us: the mini statues crafted and placed by Mihály Kolodko all around the city. First we found "the diver" by chance; another day we went looking for some of them more deliberately. It was also a lot of fun for the kids to discover the "hidden" small statues. They all have a background story, and again Budapest Flow has an article on the hidden mini statues that we used as a reference.

In downtown Budapest there are a lot, really a lot, of old houses full of fine ornaments. These buildings, however, are in very different states. Some are well preserved and taken care of, some seem to be running down. And occasionally you spot a facade where only some parts (belonging to individual apartments) are refurbished, while the rest is neglected. As a local friend told us, all the entertainment venues are moving into this part of town while being run by companies registered in other districts, so the district itself is actually poor. And the residents lack either the means or the interest to maintain the buildings; there are no subsidies or other kinds of support. Besides, more and more apartments are being turned into holiday rentals, so even fewer locals will find a place in that part of the city.

Sightseeing

The best sight is the city itself by night, when everything along the river banks and up the hill is illuminated. That's just beautiful. During the day, there is no such magic.

Buda

Like many mid-sized cities with a hillside, Budapest has a castle. To get there, you can walk up the hill; the pedestrian paths look quite nice, especially on a sunny day. We had to take the funicular, however… kids today. That funicular was destroyed in the Second World War by German occupation forces, but was reopened in 1986. It definitely is a shortcut with a view and goes right up to the top, between the castle and the presidential palace.

At the castle you can walk along the walls and enjoy a great view of the city. Currently there are lots of construction sites at the castle, though, and not everything is accessible. We thought about visiting the castle museum on site, but decided against it. The weather was quite lovely, so instead we explored the courtyards and continued towards the Fisherman's Bastion. It is a beautiful building as well, and from there, too, you have a great view over the city. From there it is possible to walk down the hill, but with our kids, of course, we went back.

…and had the chance to see a Japanese delegation being welcomed at the presidential palace with military honors, if you can call them that. Soldiers performed their salute ritual while quite a crowd watched the spectacle, before the delegation entered the palace.

We skipped the citadel due to lack of time, but I heard it is also full of construction sites.

Pest

The jewel on the Pest side, right on the river bank, is the magnificent parliament building. It is advisable to book a ticket in advance, as it is quite popular and slots are scarce. A guide leads you through the building and tells its history, from planning and construction (it opened in 1902), through the wars, until today. It is one of the largest parliament buildings and used to house the two chambers of parliament in its two wings. Since the old aristocratic Upper House no longer exists, that part is used for the tours, but also for conferences and meetings.

Researching Budapest reveals that there are thousands of different kinds of museums. We decided to visit the cat museum. It is a lovely, calm place in a former two-story apartment. Here, too, it is best to get tickets in advance, as they are valid only for a ninety-minute time slot, which ensures that not too many visitors are in the museum at once. A dozen cats roam around, and there is plenty to read and see as well. The atmosphere is really relaxed, and you can just chill in a cozy spot and pet a cat. Kids are only welcome from the age of 8. A few minutes away there is also a cat cafe, but even though we wanted to, we did not manage to visit it.

We walked a lot in the Jewish quarter, partly because it was close by, partly because a lot of things were there: a big part of the street art, some mini statues, food places. And of course the main Synagogue, but also some Jewish restaurants and institutions. The most famous ruin bar of Budapest, Szimpla Kert, is there as well. It is definitely fun to walk through, but it has nothing left of its original purpose. Ruin bars used to pop up in abandoned factories, serving affordable beer, for instance. Perhaps unsurprisingly, the audience has changed, and so have the prices; I would say it is rather another hipster place. Still, it is fun to go through it and explore that area.

Danube

Apparently everybody takes a river cruise there. Different companies have different offers, including dinner and live music, but most tours happen during the day. Take one after dark: you will be able to go on deck and gaze at the marvellously illuminated city at night. It is also a great opportunity to take some pictures.

On the river bank on the Pest side there is also one of the memorials to the Jews who were shot at the river during the German occupation in World War II by members of the fascist Arrow Cross Party, which Nazi Germany had put in power. The Shoes on the Danube were placed there in memory of the 20,000 people who were executed on the river bank and fell into the Danube.

There are more memorials than this one, and it infuriates me to travel around and find traces of fascist terror from Kaliningrad, through Budapest, to Belgrade. To those who support or downplay such parties in our time: this is what they stand for, and there is no fcking excuse for being their enabler. They are beasts.

Flower power?

What struck us was… that there were artificial flowers everywhere. In the apartment (OK), in the breakfast place, in the restaurants, even sometimes on the streets. We did not find an explanation for it, but it was striking. Very strange.

Food tips

To conclude, where to get what?

Find great Hungarian food with vegetarian options at Klauzál Café. It was a recommendation by a local friend, and I am happy to pass it on!

The hummusbar chain, found in a couple of places, has great oriental food, and the inconspicuous Italian restaurant Mia Valentina was also surprisingly good, with absolutely friendly staff. We were not so excited about the places around the castle.

For a sweet snack along the way, you cannot avoid ending up with a chimney cake in your hands. Simple, yet delicious. I swear by the coconut ones; the kids favour those with poppy seeds. I am not sure you can get bad ones, but the prices are sometimes quite steep. At a booth between Bajcsy-Zsilinszky út and Andrássy út (not far from Erzsébet Square) you can get hold of them for just 800 forints (that is roughly 2 EUR)!

There are also countless choices of tasty cakes. We should have tried them all, but somehow did not. We found The Sweet, which is also open until 8 pm, and their cakes are delicious!

Further reading

As spotted on the Fediverse, someone else was in Budapest at the same time (surprise, right), and he, too, blogged about it (in German). Check it out for another perspective and a lot more pictures.

Choosing a private pension component 30 Mar 2024 1:36 PM (last year)

There are a lot of things out in the world that catch my interest and curiosity, that I like to dive into, learn more about, and get excited about. Contemporary financial matters are not among them. Also, I have only limited trust, to put it politely, in the various actors around financial products. It feels like swimming in a shark pool.

There are chores in life that are just not fun, but are better done anyway. So with all the discussions we are having about pensions in Germany, and all the bits of information that went in and out of my head (with whatever stuck) over the past years, it ultimately felt necessary to act on it: financing my retirement.

And if growing old still pays off

Then why does my mother

At 74 still have to keep at it

And go to work every shitty day?

"Von unten nichts neues" by Pascow

So state pensions will not amount to much. Inflation will eat its share. Taxes will be deducted. Consultants talk of a gap between the money you need to finance your life(style) and the money you will have available. It is called the Rentenlücke (pension gap), and one might want to close one's own.

The scenario

Back at my first job, I signed up for a Betriebliche Altersvorsorge (occupational pension scheme), which, to be honest, I do not understand much about. Nevertheless, I have it, it is a stable addition to the state pension, and by now the employer is also required to subsidize it a bit. And still, the expected state pension and Betriebliche Altersvorsorge combined do not add up to today's salary.

Or to whatever my future expenses will be. Likely I will not be feeding the kids anymore, but how all the other costs develop, and what happens along the way, is a blank page and not really predictable.

Not being a rich heir and not having much in my accounts either, my options are already limited. With less than thirty years ahead, there is also not much time for very low-risk options, as interest rates are pretty low.

Everybody is talking about ETFs

And so the spotlight turns to something with shares.

They™️ have been talking about ETFs for a good while now. Some independent institutions, like Finanztip, keep recommending them. ETF is short for Exchange-Traded Fund, which tells you only a little more than the abbreviation itself. ETFs are set up by financial institutions and try to replicate or follow an index, which in turn is set up to track the performance of a stock market. An index can be built around a region (even down to single countries), sectors (a couple of related industries) or stock exchanges (e.g. NASDAQ), for example. Indices can be formed with different criteria, even including being "ethical" or "sustainable"; so much for the theory.

Some friends I talked to also decided that ETFs are a worthwhile solution and are investing their money there. Altogether it does not look like much of a scam; this type of product has been around for a decent amount of time, and I did not sense any fundamental catches.

But.

The conscience awakens

Personally, when I act, I try to take into consideration what the consequences are and what and who I support with my decisions. I care about the well-being of others, and about the future of our living space (the planet should be liveable for my children as well). Ethical and sustainable factors are important to me.

The first such question is whether to consider shares at all. On a stock exchange there are no small companies, and the larger the corporation, the more evil it is. Buying shares supports them: not directly, as the companies themselves do not see any money when shares are bought (unless they are issuing them fresh), but indirectly, by increasing their valuation and thus enabling them to raise money more cheaply and easily. This strengthens their economic power and possibly their political influence.

Also, it is cumbersome to buy and manage shares individually. Apart from my lack of interest in this topic, the risk can only be spread so much across a handful of hand-picked companies. Each transaction also has its cost, making this approach less efficient.

ETFs and investment funds do not have this disadvantage. The provider of the ETF or fund buys everything on the investors' behalf. With the leverage of large monetary resources they can buy more shares, of more companies, and reduce the risk. However, with that power they also act as shareholders: as co-owners of the companies they exercise the shareholder rights on the customers' behalf, ultimately in their own interest.

And there was risk

Risk was mentioned before, but how does it manifest? It boils down to the fluctuations in stock prices. Ultimately, profit can only be realized by selling the shares, at a higher price than they were bought for.

Promoters of share-based products keep saying that there is a risk, but that over time, given enough time, the graphs go uphill and you will end up with a profit when investing for ten years or more. Crises happen regularly, of course, but eventually stock prices recover and climb higher again.

Part of the story is also that there is change: companies die and grow, so do industries, and entire regions have hard times and good times. The recommendation is to diversify. So the best approach is a mixture of everything from everywhere: every region, every industry. If single companies or places have issues, the size and breadth of the fund will even out the shortcomings.

Ingredients

There is a lot of documentation about products like ETFs and investment funds. It includes several facts and figures, as well as goals, intentions and target groups. In the end, the selected fund should, apart from being diverse, be neither too young nor too small in terms of the money invested so far. This should give some certainty that the fund will not be liquidated. As an investor that is not an issue per se, but one probably does not want to deal with it, and taxes may apply.

The largest holdings of the portfolio are listed as well, together with the regions, so you can see which companies and which regions the money has gone into so far. Even when you filter for "sustainable" funds only, watch out for red flags. I found Nestlé. And the majority contain shares of GAFAM: Google (Alphabet), Amazon, Facebook (Meta), Apple and/or Microsoft. Such a concentration is already a risk in itself (the US tech sector), but those companies also represent everything I despise.

In fact, after sifting and sifting and sifting through ETFs, I had a shortlist where each and every candidate had a drawback. I was neither happy nor satisfied, just had stomach aches, and decided on none of them.

Actively managed Investment Funds

ETFs have further advantages in terms of efficiency. Especially passive ones, which are controlled by software since they only follow an index anyway, have low running costs. So they do not cut into your yield too much, as long as you pay attention when choosing them.

Since I had lost my appetite for ETFs anyway, I looked for actively managed funds. Humans are involved there, and typically these funds do not perform as well, but there seems to be a much bigger choice. And so I found one that was sufficiently convincing, not too expensive, and not obviously holding despicable positions. The fund is also managed by one of the lesser evils. And I have a savings plan through which I funnel a small amount into that fund monthly.

How I feel with that choice

My impression is that I understand enough to make a decision, and to make this decision. I do not have stomach aches with it. During the research process I did not rush things; I slept on some ideas and corrected some along the way. All in all it took me weeks from concrete research to action.

I did not turn the whole thing into a rabbit hole either. I could investigate several directions more deeply, or try to calculate some aspects more accurately, but there has to be a line where to stop. At some point it is good enough (and there are other things in life I want to spend my time on).

Whether this is the right decision, I still have no clue. There is a good amount of uncertainty, especially in this political and environmental climate. It is an attempt to prepare, and I come to the conclusion it is reasonable, yet altogether I am still unsure to some degree. But it is okay. And perhaps I am overthinking this already, which might be just a boring, ordinary and obvious action for anybody else. Perhaps it is just a case of growing up with a German savings book mentality ;)

Feb 19 18 Feb 2024 4:14 AM (last year)

Gökhan Gültekin. Sedat Gürbüz. Said Nesar Hashemi. Mercedes Kierpacz. Hamza Kurtović. Vili Viorel Păun. Fatih Saraçoğlu. Ferhat Unvar. Kaloyan Velkov.

Four years ago, they were murdered by a racist in Hanau, Germany, a town I had never heard of before. Children lost their mother or father. Parents lost their children. Siblings lost their brothers and sisters. And of course friends lost their friends. Their lives are broken, and they are suffering to this day.

The cowardly attack happened on February 19. Since then, every year as February comes close, the memory of the tragedy creeps back into my mind. It makes me sad: young people robbed of their lives, the lives of their families and friends destroyed, for nothing but crazy shit insanity.

It makes me angry and furious about the sludge in which such a monster can grow, fueled by conspiracy tale-tellers, terrorist parties like the AfD, but also by politicians adopting their words, and by racist structures, e.g. in the police.

While this is nothing remotely close to what the victims and their loved ones experienced, the murderer (with all his accomplices, direct or not) also ruined the joy I should feel on my birthday.

It was not the only attack in the recent past, and there have been far too many: the bloody trail of the NSU, the unpunished murder of Oury Jalloh in police custody, the racist and antisemitic shooting in Halle, and you could go on and on.

Nowadays, people in Germany are taking to the streets by the hundreds of thousands, millions altogether, taking a stand against racism and lighting beacons of hope against the political atmosphere. Anti-constitutional, neo-fascist parties like the AfD must be banned, and racist structures in the police and other official bodies have to be smashed and dissolved.

Gökhan Gültekin. Sedat Gürbüz. Said Nesar Hashemi. Mercedes Kierpacz. Hamza Kurtović. Vili Viorel Păun. Fatih Saraçoğlu. Ferhat Unvar. Kaloyan Velkov.

Say their names.

Isolating Nextcloud app dependencies with php-scoper 24 Jan 2024 9:26 AM (last year)

In software with plugin capabilities, typically in interpreted languages, plugins may ship their own dependencies, which can collide with dependencies included in the core product or in other plugins. This is not a problem when the same version is used, but it turns into one when different versions that are incompatible with each other are shipped. The end user may then face bugs. Developers call this dependency hell.

In Nextcloud these plugins are the various apps, coming from various sources.

Example:

- The SharePoint backend indirectly (dependency of a dependency) ships firebase/php-jwt in version 6.10.0.

- The SAML/SSO backend ships firebase/php-jwt in version 6.8.1

- Since classes are loaded dynamically (autoloaded), it is unclear which version will be loaded

- … but not both, since the same class cannot be declared twice

- I do not know whether these versions are compatible now, but if they were…

- … it might look very different with the next update

While managing dependencies is not exactly a new thing, the existing solutions in PHP land are not that numerous or feature-rich. Some apps use mozart for that purpose. mozart was geared towards WordPress plugins, though, and it is also no longer in active development.

The other available tool is php-scoper, and this is what this blog post is about. In my example, one direct dependency pulled in the colliding one in a new version. To make sure no shenanigans would ensue, I had to isolate my app's dependencies; to scope them, in other words.

This is achieved by moving the dependencies into my app's namespace. By doing so, the dependencies are no longer loaded under their original fully qualified class names, but under app-specific ones. So the same library can be loaded several times, once per app, without interference.
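To make the renaming concrete, here is a tiny Python sketch of it (purely illustrative, the actual work is done by php-scoper). It assumes the OCA\<AppNamespace>\Vendor prefix used for the SharePoint app; the second app namespace, User_SAML, is only an assumed example of another app shipping the same library.

```python
def scoped_class(app_namespace: str, original_fqcn: str) -> str:
    """Return the app-specific class name a dependency class ends up with after scoping."""
    # A leading backslash (fully qualified notation) is dropped before prefixing.
    return "\\".join(["OCA", app_namespace, "Vendor", original_fqcn.lstrip("\\")])


# Two apps ship the same library class, but after scoping the names no longer clash.
sharepoint = scoped_class("SharePoint", "Firebase\\JWT\\JWT")
saml = scoped_class("User_SAML", "Firebase\\JWT\\JWT")  # assumed second app namespace
print(sharepoint)  # OCA\SharePoint\Vendor\Firebase\JWT\JWT
print(saml)        # OCA\User_SAML\Vendor\Firebase\JWT\JWT
```

Since both resulting names differ, the autoloader can serve each app its own copy of the library.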

As we did not have prior art with php-scoper, I could explore my way into it.

Objectives

First, an idea and a plan form the foundation that paints the picture of the desired outcome. In order not to totally reinvent the wheel, I looked at a battle-tested app, Nextcloud Talk, which uses mozart and does some very sensible things altogether. Then I formulated my objectives.

Targeted Namespace: \OCA\{AppId}\Vendor\{OriginalNamespace}

As hinted in the introduction, the dependencies need an app-specific namespace, and this is how it shall look. \OCA is the conventional and mandatory namespace prefix for apps in Nextcloud, followed by the app id with a capitalized first character.

The next parts follow and replicate the folder structure, and placing the dependencies in Vendor makes it obvious that these components come from third parties.

Without scoping, they would just remain in the vendor directory at the app's root.

Development dependencies are ignored

They do not matter, as they are not shipped with a proper release.

In theory, you may have collisions in your development setup, and occasionally it is awkward to figure this out. But this happens maybe once a year if you are unlucky, and scoping the development dependencies would probably even make some activities worse. So, not worth the effort.

lib/Vendor not committed into git

This path, relative to the app project root, will be the parent location for the dependencies. In Nextcloud apps, lib/ is typically the source root: the starting point for \OCA\{AppId}\.

As I do not want the dependencies committed to the git repository, this directory shall not appear there either. Instead, the package management metadata is committed and used to fetch the code on demand.

Optimized autoloader with dependencies included

For the dynamic loading of the source classes, called autoloading, the package manager composer can dump the class information for a quick lookup. The dependencies have to be included there with their prefixed namespace.

App is able to run after composer install [--no-dev]

After installing the dependencies based on the stored metadata, all the scoping steps should be applied immediately, so the app is ready without any further ado. The same applies when updating the dependencies with composer update. Any additional manual steps should be avoided for a good and consistent developer experience.

Successful Packaging

Obviously, the production dependencies and autoload information should be in their destination places when creating the release archive.

Implementation

The road to the final result was a little bumpy for different reasons, but I will not bore you with that. I outline the key steps, with details where needed.

Adding php-scoper to composer

First, we add the bin plugin for composer as a production dependency, because we need php-scoper to also run when no development dependencies are installed: think building the release archive, or running the app without all the dev tools. The bin plugin allows us to have php-scoper alongside the production dependencies, while keeping it out of the way (and easy to discard).

```shell
composer require bamarni/composer-bin-plugin
composer bin php-scoper require humbug/php-scoper=0.17.0
```

I had to pull php-scoper in version 0.17.0, because it is the last one that properly supports PHP 8.0. While not a production dependency, it still runs when installing dependencies, e.g. on CI. If you do not have this restriction, a later version will do. Since some versions of php-scoper do not state the correct PHP requirements or lack compatibility, some trial and error may pave the way.

Configuring php-scoper

The default configuration file is named scoper.inc.php and is expected in the project root directory. The namespace prefix can be defined there, as well as which directories should be processed. Some more tricks are possible there, but I did not need them. I might have used the output-dir flag, but it is not available in 0.17.0, so php-scoper writes the adjusted files to build/. Since we have to move the results around anyway, this is no big deal.

My resulting config is essentially:

```php
<?php

declare(strict_types=1);

use Isolated\Symfony\Component\Finder\Finder;

return [
    'prefix' => 'OCA\\SharePoint\\Vendor',
    'finders' => [
        Finder::create()->files()
            ->exclude([
                'test',
                'composer',
                'bin',
            ])
            ->notName('autoload.php')
            ->in('vendor/vgrem'),
        Finder::create()->files()
            ->exclude([
                'test',
                'composer',
                'bin',
            ])
            ->notName('autoload.php')
            ->in('vendor/firebase'),
    ],
];
```

Some folders (test, composer, bin) should be ignored, as well as custom autoload.php files. I handpicked my two selected dependencies, so that the scoper would leave dev dependencies alone. This might not be the best approach when having a large number of dependencies.

Placing the adjusted deps to the final destination

While php-scoper changes the sources and patches the namespaces where required, it does nothing to the directory structure. That step was left to me, so I wrote a script that rearranges the original top-level directories to become PSR-4 compliant. For example:

We find:

```
build/vgrem/php-spo/
build/firebase/php-jwt/
```

and they should end up at:

```
lib/Vendor/Office365/
lib/Vendor/Firebase/JWT/
```

This structure has to be derived from the ['autoload']['psr-4'] data in each dependency's composer.json file, which states the (new) namespace as well as the source root.

So the process is to:

- iterate over the organization directory

- iterate over the project directory

- read out the psr-4 information from the composer.json

- create the destination path (and make sure it is empty)

- move the source to its place

- clean up the rest

Since we are in PHP land anyway, I wrote it in PHP and stored it in the project root. It is a bit lengthy, so check it out on the code platform.
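For illustration only, here is a Python sketch of the steps listed above; it is not the actual lib-vendor-organizer.php. It assumes that each project directory contains its (scoped) composer.json, that the PSR-4 keys may already carry the scoping prefix (which is stripped again to get the relative path), and it only takes the first directory when a PSR-4 entry lists several. The function names are hypothetical.

```python
import json
import shutil
from pathlib import Path


def namespace_to_path(namespace: str, prefix: str) -> Path:
    """Turn a PSR-4 namespace key into a relative directory, dropping the scoping prefix."""
    ns = namespace.strip("\\")
    prefix = prefix.strip("\\")
    if prefix and ns.startswith(prefix + "\\"):
        ns = ns[len(prefix) + 1:]
    return Path(*ns.split("\\"))


def organize(source_root: Path, target_root: Path, prefix: str) -> list[Path]:
    """Move <org>/<project>/<psr-4 source dir> to target_root/<namespace path>."""
    moved = []
    # Iterate over the organization directories (e.g. firebase, vgrem) ...
    for org_dir in sorted(p for p in source_root.iterdir() if p.is_dir()):
        # ... and over the project directories within them (e.g. php-jwt).
        for project_dir in sorted(p for p in org_dir.iterdir() if p.is_dir()):
            composer_file = project_dir / "composer.json"
            if not composer_file.is_file():
                continue
            # Read out the psr-4 information from composer.json.
            composer = json.loads(composer_file.read_text())
            psr4 = composer.get("autoload", {}).get("psr-4", {})
            for namespace, src in psr4.items():
                # PSR-4 values may be a string or a list; take the first entry for simplicity.
                src_dir = project_dir / (src[0] if isinstance(src, list) else src)
                destination = target_root / namespace_to_path(namespace, prefix)
                if destination.exists():
                    shutil.rmtree(destination)  # make sure the destination is empty
                destination.parent.mkdir(parents=True, exist_ok=True)
                shutil.move(str(src_dir), str(destination))
                moved.append(destination)
        shutil.rmtree(org_dir)  # clean up the rest of the original layout
    return moved
```

Called as organize(Path("build"), Path("lib/Vendor"), "OCA\\SharePoint\\Vendor"), it would turn build/firebase/php-jwt/src into lib/Vendor/Firebase/JWT, assuming php-jwt declares Firebase\JWT\ with src as its PSR-4 source root.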

Combining it all

Now that my yak is nicely shaved I can put the last pieces together. composer post-install and post-update scripts will run everything that is needed to pull the dependencies and make them ready.

```json
"post-install-cmd": [
    "@composer bin all install --ignore-platform-reqs # unfortunately the flag is required for 8.0",
    "vendor/bin/php-scoper add-prefix --force # Scope our dependencies",
    "rm -Rf lib/Vendor && mv build lib/Vendor",
    "find lib/Vendor/ -maxdepth 1 -mindepth 1 -type d | cut -d '/' -f3 | xargs -I {} rm -Rf vendor/{} # Remove origins",
    "@php lib-vendor-organizer.php lib/Vendor/ OCA\\\\SharePoint\\\\Vendor",
    "composer dump-autoload -o"
],
"post-update-cmd": [
    …
]
```

Those commands are executed every time after composer install or composer update is run. Nifty. First, php-scoper is installed through the bin plugin. Unfortunately, I have to ignore the platform (PHP version) requirements to cover running on PHP 8.0 to 8.3. Try your case without that first.

The second command actually runs php-scoper, by using the configuration it finds under its default config location.

Then, we make sure that lib/Vendor is deleted and we rename the resulting build/ to this path.

The next command cleans up the original directories in vendor/, since php-scoper does not do that. We want to be sure to have no collisions during our development work, so the traces of the treated dependencies should vanish (and only those).
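The find | cut | xargs pipeline is a bit dense. As a hedged illustration, this Python sketch does roughly the same: for every top-level directory under lib/Vendor (at that point still the organization names such as firebase and vgrem, because the organizer script runs afterwards), it removes the entry of the same name from vendor/. The function name is hypothetical.

```python
import shutil
from pathlib import Path


def remove_origins(scoped_root: Path, vendor_root: Path) -> list[str]:
    """Remove vendor/<name> for each top-level directory of the scoped tree."""
    removed = []
    for entry in sorted(scoped_root.iterdir()):
        if not entry.is_dir():
            continue  # only directories are considered, roughly like `find -type d`
        origin = vendor_root / entry.name
        if origin.is_symlink() or origin.is_file():
            origin.unlink()  # like rm -Rf, drop the link or file itself
        elif origin.is_dir():
            shutil.rmtree(origin)
        else:
            continue  # nothing to remove; rm -Rf would silently do nothing as well
        removed.append(entry.name)
    return removed
```

Only the directories that were scoped disappear from vendor/; everything else, such as the autoload files and other packages, stays untouched.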

Subsequently, our script runs and adjusts the final paths. It takes two arguments: the source directory and the namespace prefix. This helps avoid hardcoding such information.

Eventually the autoload information is generated and dumped.

The update script is identical, therefore you see the ellipsis in the snippet.

Updating the owned code

What the scoper does not do for you is adjust the namespaces in your own code files. So everywhere classes from the old namespaces are used, they have to be prefixed with OCA\{AppId}\Vendor\.

Working on a small app, I did this manually in PhpStorm. With Column Selection Mode it goes quickly (one paste per file), and I see possible issues immediately. Using sed combined with (ack-)grep and/or find could be a quick alternative. Or perhaps digging deeper into the php-scoper configuration could reveal some automatic options.
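A sketch of the sed-style alternative, written in Python here: it prefixes references to a given list of original namespaces, with or without a leading backslash, and skips occurrences that are already prefixed. The namespace list and the prefix are assumptions to adjust per app; namespaces inside strings with doubled backslashes, or a bare use statement for the namespace itself (without a trailing segment), are not handled.

```python
import re


def prefix_namespaces(source: str, namespaces: list[str], prefix: str) -> str:
    """Prefix references to the given original namespaces with the scoping prefix."""
    for namespace in namespaces:
        # Match "Firebase\JWT\...", optionally with a leading backslash, but not when it
        # is preceded by a word character or backslash (i.e. it is already prefixed).
        pattern = re.compile(r"(?<![\w\\])(\\?)" + re.escape(namespace) + r"\\")
        # A function replacement avoids escaping issues with backslashes in re.sub.
        source = pattern.sub(
            lambda m: m.group(1) + prefix + "\\" + namespace + "\\", source
        )
    return source


php = "use Firebase\\JWT\\JWT;\n$t = \\Firebase\\JWT\\JWT::decode($jwt, $key);\n"
print(prefix_namespaces(php, ["Firebase\\JWT"], "OCA\\SharePoint\\Vendor"))
```

Because already prefixed names are skipped, running it a second time leaves the files unchanged, which makes it safe to call from a composer script.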

Packaging

I use krankerl for packaging Nextcloud apps, and the only thing I had to adjust was to add some paths to the .nextcloudignore file, so they will not be included in the release archive. The entries added were lib-vendor-organizer.php, scoper.inc.php, vendor-bin, vendor/bin and vendor/bamarni. Apart from that, the vendor directory contains the autoload configuration, which I keep in its usual place.

In krankerl's configuration, composer install --no-dev is the sole before-packaging command, and that is all it takes (at least if you do not need frontend-code voodoo).

Push it

Do not forget to add lib/Vendor to .gitignore.

Commit everything, push it to git and let CI run over it. Fix or adjust tests if they are not green. I did additional smoke testing. All this depends on your test coverage and possible test cases.

Different approach

While I was working on my solution, unbeknownst to me, dear Marcel was working on his, which resulted in a slightly different approach. Key differences are:

- Using the latest php-scoper and supporting only PHP 8.2 and 8.3.

- Placing the dependency directly into lib/ (the use case contained only one dependency)

- Replacing the namespace in code using sed from within the composer scripts (seems worthwhile!)

- Additional patcher in the php-scoper configuration to replace namespace in comments within the dependency codebase (also a great idea!)

Caveats and limitations

I find the series of commands in the composer script a bit hacky, and I also had to write the script that arranges the final directory structure. I would have loved for php-scoper to do this itself (contributions welcome, I suppose), which I believe mozart does out of the box, unless I overlooked something.

What can always be a pitfall is dependency code that creates or instantiates classes dynamically, using string concatenation for instance. This is something to watch out for, and it might make some dependencies very tough to scope.

Marcel encountered an issue with current versions (0.18.*) of php-scoper that led to problems autoloading specific files that do not contain classes, just namespaced functions. A manual require_once solves that. A fix is already merged and will ship with a v1 release of php-scoper.

Recapitulate

- Install humbug/php-scoper through the composer bin plugin, which must be a production dependency.

- Configure php-scoper

- Add the lib-vendor-organizer.php script

- Configure composer post-install and post-update scripts

- Adjust your own code

- Test release building, run CI, do some smoke testing

Cutting down memory usage on my Sailfish app 26 Dec 2022 2:58 PM (2 years ago)

For a while, the growing memory usage of my Nextcloud Talk app for SailfishOS was something I kept in the back of my head. For the longest time the potential leak was an occasional observation when looking at which apps or processes might hoard memory. My app was never slim, and it looked like it would only grow in RAM usage.

So this was a problem I had wanted to tackle for a while, although I was lacking tooling (I had already dug around there without much success) and an in-depth understanding of the technology used (after all, it is a side project with pragmatic goals and very limited time resources).

Then, the other day, Volker Krause blogged about best practices for working with QNetworkAccessManager. Suddenly the reasons for the memory leaks were all too clear, and a way out was laid out nicely.

Situation overview

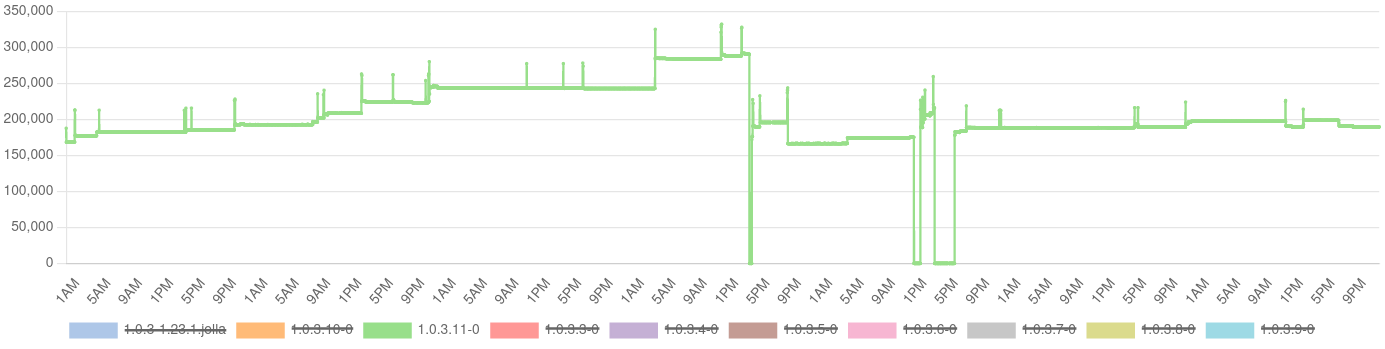

Now, before rushing to the IDE, it would be nice to first have an overview of the current state. Not just to prove I have the leak, but to see how it actually manifests. Data, in other words. This would also allow me to compare my approaches and evaluate intermediate results.

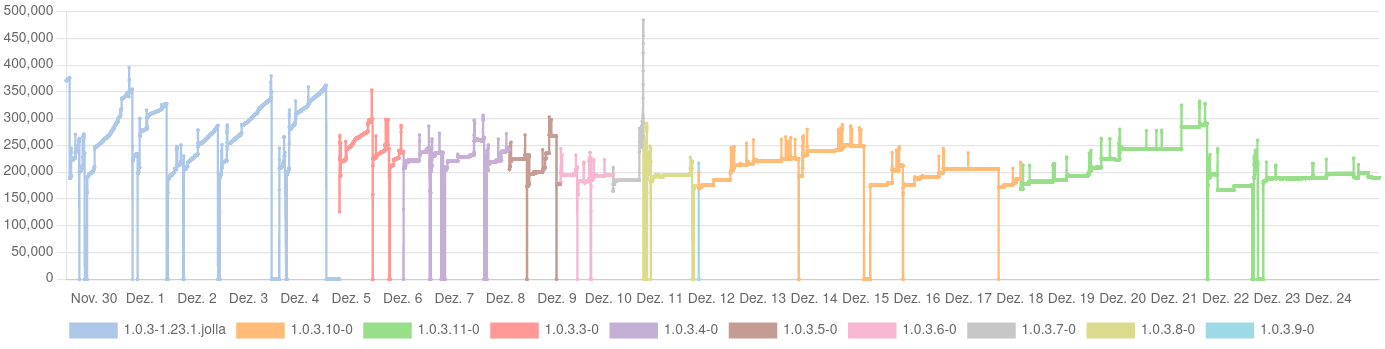

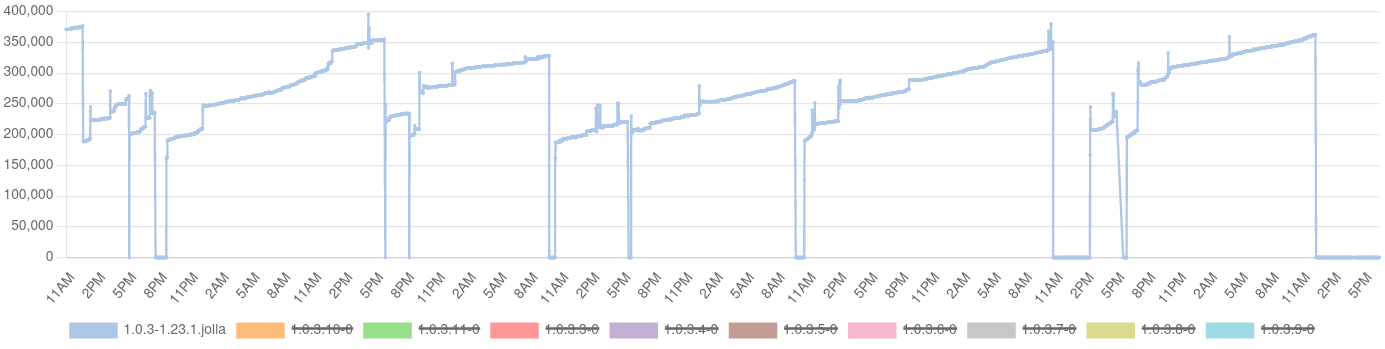

And so in the beginning there was a script. This script runs every minute, controlled by a systemd timer. When it runs, it collects the memory used by my app and uploads it along with the app version to my Nextcloud Analytics. Of course the script could be more sophisticated, but it does its job pretty well, and I had no reason to make it more complex. I put it into a repository on Codeberg, with brief instructions.

The memory usage is read from the total value of the pmap output, after finding the main process ID. While reading up on this matter, I also stumbled across this blog post by Milian Wolff, doing something similar with pmap and gnuplot. This was reassuring and inspiring.
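The actual script lives in the Codeberg repository and is not reproduced here. As a rough Python sketch of the reading part only: it assumes the procps pmap default output, whose last line reads like "total 184160K", and it expects the process ID to be looked up separately. The function names are hypothetical.

```python
import subprocess


def parse_pmap_total_kib(pmap_output: str) -> int:
    """Return the total mapped memory in KiB from default `pmap <pid>` output."""
    for line in reversed(pmap_output.splitlines()):
        fields = line.split()
        if fields and fields[0] == "total" and fields[-1].endswith("K"):
            return int(fields[-1][:-1])
    raise ValueError("no 'total ...K' line found in pmap output")


def read_total_kib(pid: int) -> int:
    """Run pmap for a process and extract its total (requires pmap on the PATH)."""
    result = subprocess.run(
        ["pmap", str(pid)], capture_output=True, text=True, check=True
    )
    return parse_pmap_total_kib(result.stdout)
```

Keeping the parsing separate from running pmap makes it easy to check against a saved sample of the output.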

The result: a saw tooth. Memory consumption would go up steeply and linearly, again every time the app was started (0 values mark the times when the app was not running).

It is also noteworthy that the app may have stopped for three reasons:

- Killed by the low-memory killer

- Crashed in a network switch case

- Manually stopped (seldom)

On my device, it is quite common for the low-memory killer to take action, although, frankly, I never actively looked into this (other apps suffer as well; my phone has too little memory). The thing is: with a small memory footprint, this can be made unlikely, too. The crash on network switches would frequently happen when leaving WiFi and going mobile. The typical scenario is going out to pick up C2 from day care.

Iterative fixes

Now that I had a checklist for the changes, a tool good enough for measuring the RAM usage, and the data to work against, I could take a small slice of time each evening and change my code bit by bit, watching the effects.

I started with the areas where I expected most of the RAM usage to come from. That way I could, first, make sure I was actually taking steps in the right direction and, second, watch out for regressions by dog-fooding.

Over the whole process I went through nine builds. When I could, I would change one component per night and do a build. I had regressions due to changes in event handling: I used to have an instance of QNetworkAccessManager per component and connected to its finished signal. At first I wrongly kept doing that and tried to tell the replies apart by setting a custom property on the corresponding QNetworkReply, until I figured out that QNetworkReply emits a finished signal itself.

By-catch

While working on the code, and consuming a good share of Qt documentation, I was very happy to also figure out where the network-dependent crash happened, and could fix it as well. It had annoyed me for such a long time, but the crash never left any traces.

Results

I reduced the average memory consumption by about 25%, from more than 266 MB to about 200 MB. The peak fell from 387 MB to 325 MB, about 16% lower. The median went from 267 MB to 189 MB, a reduction of about 30%. A very nasty bug was fixed, disk caching was enabled to cut down some network traffic, and I learnt an awful lot again :) The numbers are, of course, specific to my usage: I have three accounts connected, two work-related and a family one. The main work account is a high-traffic one. In off hours I am typically on DND, or have the accounts temporarily disabled.
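The relative changes follow directly from the reported numbers. Here is a small sketch to recompute them. The hypothetical `summarize` helper also shows how mean, median and peak could be derived from raw samples, assuming the 0 ("not running") samples are excluded. That exclusion is an assumption, not necessarily how the figures above were computed.

```python
from statistics import mean, median
from typing import Dict, List


def summarize(samples_mb: List[float]) -> Dict[str, float]:
    """Mean, median and peak of the samples, ignoring 'not running' zeros."""
    running = [s for s in samples_mb if s > 0]
    return {"mean": mean(running), "median": median(running), "peak": max(running)}


def reduction_pct(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return (before - after) / before * 100


# Values in MB as reported above.
for name, before, after in [("average", 266, 200), ("peak", 387, 325), ("median", 267, 189)]:
    print(f"{name}: {reduction_pct(before, after):.1f}% lower")
# average: 24.8% lower
# peak: 16.0% lower
# median: 29.2% lower
```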

I am also confident enough now to use the oom_adj knob to keep my app from being terminated by the low-memory killer; it is one of two apps on my smartphone that I really do not want to be stopped. The commits are collected in this pull request.
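For reference, this per-process knob lives in `/proc/<pid>/`. The sketch below writes to `oom_score_adj`, the newer Linux interface (range -1000 to 1000, where -1000 exempts the process from the kernel's OOM killer). The legacy `oom_adj` file mentioned above uses the range -17 to 15 instead. Whether a given phone's low-memory killer honours the value depends on the platform, and lowering it generally requires elevated privileges (CAP_SYS_RESOURCE). The function name and the `proc_root` parameter, which exists only to make the sketch testable, are hypothetical.

```python
import os


def protect_from_oom_killer(pid: int, score: int = -1000, proc_root: str = "/proc") -> None:
    """Write `score` to /proc/<pid>/oom_score_adj (-1000 = never pick this process)."""
    if not -1000 <= score <= 1000:
        raise ValueError("oom_score_adj must be between -1000 and 1000")
    path = os.path.join(proc_root, str(pid), "oom_score_adj")
    with open(path, "w") as f:
        f.write(f"{score}\n")
```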

Not all leaks are addressed yet, but the scale is totally different. The saw tooth is gone, and the remaining slow rise is no longer related to QNetworkAccessManager. Apparently, though, not everything is cleaned up when leaving a conversation view (the spikes indicate having entered a conversation).

The changes described here shipped with version 1.0.4.

Trivia

The other day I asked Carl to proofread this blog post (thank you!). It turned out that he had taken some of this code for the Tokodon app (a KDE Mastodon client). Eventually, Volker fixed some bits of the networking code, resulting in his aforementioned blog post. And here we are again :D